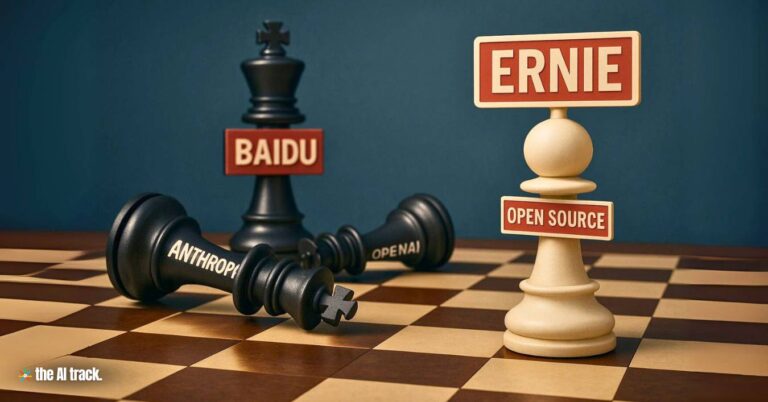

Baidu has open-sourced its ERNIE 4.5 multimodal AI model family under the Apache 2.0 license, comprising 10 model variants ranging from 0.3B to 424B total parameters. With a novel Mixture-of-Experts (MoE) architecture, strong benchmark results, and cost-efficient performance, ERNIE 4.5 represents China’s most assertive move in the open-source AI race, challenging Western players and accelerating the shift in global AI economics.

Baidu Open-Sources ERNIE 4.5 – Key Points

Baidu Open-Sources ERNIE 4.5 Family (June 30–July 1, 2025):

Baidu launched the ERNIE 4.5 model family with a gradual rollout starting June 30, 2025. The release includes 10 models, ranging from a 0.3B dense model to MoE models with up to 424B total parameters; the MoE variants activate either 3B or 47B parameters per token. All models are released under the Apache 2.0 license, marking China’s largest open-source LLM family to date.

Strategic Pivot from Proprietary to Open Source:

Baidu’s decision marks a fundamental shift from its historically proprietary stance. The move was triggered by the success of DeepSeek and broader market pressures, as acknowledged by CEO Robin Li. Industry analyst Lian Jye Su noted this pivot reflects a realization that open-source approaches can match, or outperform, closed systems.

Heterogeneous MoE with Modality Isolation and Benchmark Leadership:

ERNIE 4.5 uses a heterogeneous MoE architecture with modality-isolated routing, trained with a router orthogonal loss and a token-balanced loss. The design aims to let text and visual modalities learn jointly without one degrading the other. The 300B-A47B model outperforms DeepSeek-V3-671B-A37B on 22 of 28 benchmarks, including IFEval, Multi-IF, SimpleQA, and ChineseSimpleQA.
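
To make modality-isolated routing concrete, here is a minimal sketch in plain Python. It is an illustration under assumptions, not Baidu's implementation: the expert counts, the `top_k` value, and the names `EXPERT_POOLS` and `route_token` are all hypothetical, and the auxiliary losses are not shown. The only idea it demonstrates is that a token competes for experts inside its own modality's pool.

```python
import math

# Hypothetical expert pools; the real expert counts are not stated in this article.
EXPERT_POOLS = {
    "text": [0, 1, 2, 3],
    "vision": [4, 5, 6, 7],
}


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, modality, top_k=2):
    """Pick top_k experts for one token, restricted to its modality's pool.

    router_logits: one score per expert (length 8 in this toy setup).
    Returns (expert_id, weight) pairs whose weights sum to 1.
    """
    pool = EXPERT_POOLS[modality]  # raises KeyError for an unknown modality
    # Only experts in the token's own pool compete for the token.
    ranked = sorted(pool, key=lambda e: router_logits[e], reverse=True)[:top_k]
    weights = softmax([router_logits[e] for e in ranked])
    return list(zip(ranked, weights))


logits = [0.1, 2.0, 0.3, 1.5, 3.0, 0.2, 2.5, 0.4]
text_choice = route_token(logits, "text")
vision_choice = route_token(logits, "vision")
print(text_choice)    # experts 1 and 3
print(vision_choice)  # experts 4 and 6
```

Expert 4 has the highest score overall, yet the text token never reaches it. That is the isolation property in miniature.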

“Thinking Mode” in Vision-Language Models:

ERNIE-VL models support both “thinking” and “non-thinking” modes. The thinking mode boosts performance on reasoning benchmarks such as MathVista, VisualPuzzle, and MMMU, while the non-thinking mode is optimized for document, chart, and perception-based benchmarks. Even the smaller ERNIE-4.5-VL-28B-A3B is competitive with Qwen2.5-VL-32B while activating only 3B parameters per token.

Cost Efficiency and Market Disruption:

ERNIE-4.5-21B-A3B outperforms Qwen3-30B-A3B on math and reasoning benchmarks, despite having about 30% fewer total parameters (21B versus 30B). Baidu claims its ERNIE X1 model performs on par with DeepSeek R1 at half the cost. Alec Strasmore (Epic Loot) called this a “declaration of war on pricing,” comparing Baidu’s strategy to Costco’s disruption of premium brands.
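
The roughly 30% gap in parameter count quoted above follows from the model names, assuming they are read as total and active parameters in billions (21B total / 3B active versus 30B total / 3B active). A quick check, with `fewer_params_pct` and `active_fraction_pct` as hypothetical helper names:

```python
def fewer_params_pct(smaller_b, larger_b):
    """Percentage by which smaller_b falls short of larger_b."""
    return (larger_b - smaller_b) / larger_b * 100


def active_fraction_pct(active_b, total_b):
    """Share of a MoE model's parameters that are active per token."""
    return active_b / total_b * 100


# Parameter counts in billions, read off the model names.
gap = fewer_params_pct(21, 30)
ernie_active = active_fraction_pct(3, 21)
qwen_active = active_fraction_pct(3, 30)

print(f"ERNIE-4.5-21B-A3B has {gap:.0f}% fewer total parameters")
print(f"Active share: ERNIE {ernie_active:.1f}%, Qwen3 {qwen_active:.1f}%")
```

Both models activate about 3B parameters per token, so the difference lies in total size rather than per-token compute.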

Training Efficiency and Deployment Stack:

Baidu’s training infrastructure achieves 47% Model FLOPs Utilization (MFU) using hybrid parallelism and FP8 training. On the inference side, the stack relies on speculative decoding, 2-bit/4-bit quantization, and prefill-decode (PD) disaggregation. Models are built with the PaddlePaddle framework and supported by:

- ERNIEKit: Offers industrial-grade fine-tuning (SFT, LoRA, DPO, QAT, PTQ).

- FastDeploy: Provides OpenAI-compatible inference APIs for scalable multi-machine deployments.
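
Because FastDeploy exposes an OpenAI-compatible API, a client can in principle use the standard chat-completions request shape. The sketch below builds such a request with Python's standard library only. The base URL, port, and served model name are assumed placeholders, and `build_chat_request` is a hypothetical helper, so check the FastDeploy documentation for the values your deployment actually uses.

```python
import json
import urllib.request

# Assumed placeholders: adjust to match your own deployment.
BASE_URL = "http://localhost:8000/v1"
MODEL_NAME = "ERNIE-4.5-21B-A3B"  # the served model name may differ


def build_chat_request(prompt, base_url=BASE_URL, model=MODEL_NAME):
    """Build (but do not send) an OpenAI-style chat-completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_chat_request("Summarize the ERNIE 4.5 release in one sentence.")
print(request.full_url)
print(request.get_method())

# To send it against a running server:
# with urllib.request.urlopen(request) as response:
#     reply = json.load(response)
#     print(reply["choices"][0]["message"]["content"])
```

Keeping the request builder separate from the network call makes it easy to inspect the payload before pointing it at a real endpoint.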

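The 47% MFU figure is easier to interpret with the definition in hand: MFU is the model FLOPs a training run actually sustains divided by the hardware's theoretical peak. The sketch below uses the common approximation of about 6 FLOPs per parameter per training token, counting only active parameters for a MoE model. The throughput and peak values are invented so that the arithmetic lands on 47%; they are not Baidu's measurements, and `mfu` is a hypothetical helper.

```python
def mfu(tokens_per_second, active_params, peak_flops_per_second):
    """Model FLOPs Utilization using the 6 * N * tokens training estimate.

    The estimate ignores attention FLOPs, so treat the result as approximate.
    """
    achieved = 6 * active_params * tokens_per_second
    return achieved / peak_flops_per_second


# Hypothetical numbers chosen only to make the arithmetic visible.
example = mfu(
    tokens_per_second=1_000,       # per-accelerator throughput (assumed)
    active_params=47e9,            # 47B active parameters, as in 300B-A47B
    peak_flops_per_second=600e12,  # accelerator peak (assumed)
)
print(f"MFU = {example:.0%}")
```
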
Global Economic Shift Toward Open AI Models:

According to a Linux Foundation report, 89% of AI-using organizations have adopted open-source models, with two-thirds citing lower costs. DeepSeek claims up to 97% reduction in development cost. Sam Altman (OpenAI) admitted OpenAI needs a new open-source strategy. These shifts illustrate how open models are pressuring legacy closed systems and redefining cost structures.

Geopolitical and Strategic Context:

Baidu’s evolution into an AI powerhouse aligns with China’s 2030 national AI strategy. The company draws on more than 731 million domestic users for training data and integrates AI into state-backed initiatives like Apollo and the Belt and Road project. These are not just technological plays but strategic moves for global influence.

Skepticism and Trust Challenges:

Despite the technical achievements, concerns about transparency, security, and ethical training data persist. Sean Ren (USC) and Cliff Jurkiewicz (Phenom) warn that open weights do not ensure responsible AI. Jurkiewicz compared the open-source model experience to Android: highly flexible, but challenging to manage at scale and potentially risky in enterprise settings.

Unsustainable Industry Dynamics and Market Saturation:

Robin Li (Baidu CEO) has warned about AI market oversaturation in China. Many firms struggle to monetize due to intense competition, despite advanced capabilities. Globally, proprietary players like OpenAI and Anthropic now face the “innovator’s dilemma”: defend premium pricing while rivals offer similar or better performance for free.

Why This Matters:

The open-sourcing of ERNIE 4.5 marks a major inflection point in global AI competition. Baidu’s transition from closed to open AI reflects a broader industrial trend driven by cost, scalability, and strategic power. ERNIE 4.5 not only raises the technical standard for multimodal models but also forces a reassessment of economic models across the AI sector. The release compresses innovation cycles, widens access, and sparks global debates around trust, control, and the geopolitics of foundational AI.
