Key Takeaway:

India’s Ministry of Electronics and Information Technology (MeitY) has proposed amendments to the IT Rules, 2021, that would require mandatory labelling of AI-generated content and tighten oversight of content removal, placing responsibility on major platforms such as OpenAI, Meta, X, Google, and YouTube. The rules aim to combat the growing misuse of deepfakes, protect citizens from misinformation, and increase accountability for both government and technology firms.

India Proposes Strict AI Labelling Rules – Key Points

Mandatory AI Labelling Standard (10% Coverage Rule):

The draft amendments mandate that AI-generated visuals display labels covering at least 10% of the display area, while synthetic audio must carry a label during the first 10% of playback. Labels must be permanent or embedded with unique metadata or identifiers. This is positioned as one of the world’s first measurable standards for label visibility.

Source: Draft amendments to India’s IT Rules, 2021, published October 22, 2025.
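As a rough illustration of the arithmetic behind the 10% thresholds described above, the following Python sketch computes the minimum label area for a frame and the labelled window for an audio clip. The function names, the use of pixels and milliseconds, and the full-width banner layout are assumptions made for this example; the draft does not prescribe any particular implementation.

```python
def min_label_area_px(width_px: int, height_px: int, coverage_percent: int = 10) -> int:
    """Smallest whole number of pixels covering `coverage_percent` of the frame."""
    if width_px <= 0 or height_px <= 0:
        raise ValueError("frame dimensions must be positive")
    total = width_px * height_px
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-total * coverage_percent // 100)


def full_width_banner_height_px(height_px: int, coverage_percent: int = 10) -> int:
    """Height of a full-width banner (a hypothetical layout) that meets the threshold."""
    if height_px <= 0:
        raise ValueError("frame height must be positive")
    return -(-height_px * coverage_percent // 100)


def label_meets_threshold(width_px: int, height_px: int,
                          label_w_px: int, label_h_px: int,
                          coverage_percent: int = 10) -> bool:
    """True if a rectangular label covers at least the required share of the frame."""
    required = min_label_area_px(width_px, height_px, coverage_percent)
    return label_w_px * label_h_px >= required


def audio_label_window_ms(duration_ms: int, coverage_percent: int = 10) -> tuple[int, int]:
    """Start and end (in ms) of the opening window that must carry the label."""
    if duration_ms <= 0:
        raise ValueError("duration must be positive")
    return (0, -(-duration_ms * coverage_percent // 100))


if __name__ == "__main__":
    print(min_label_area_px(1920, 1080))        # minimum label area for a 1080p frame
    print(full_width_banner_height_px(1080))    # banner height for that frame
    print(audio_label_window_ms(180_000))       # labelled window for a 3-minute clip
```

For a 1920×1080 frame this works out to 207,360 pixels, which a full-width banner 108 pixels tall would satisfy. For a three-minute clip, the label would need to be present during the first 18 seconds.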

Definition of “Synthetic Information”:

The amendments define synthetically generated content as material “artificially or algorithmically created, generated, modified, or altered using a computer resource in a manner that appears reasonably authentic or true.” This definition underpins the broader AI labelling framework and ensures all formats, from video to text, are covered.

User Declarations and Metadata Traceability:

Social media platforms with more than 5 million users must obtain explicit declarations from uploaders confirming whether content is AI-generated. They must deploy “reasonable and proportionate” technical measures, including automated tools, to verify those declarations, and ensure that embedded metadata identifiers allow content to be traced and its authenticity checked. Platforms that fail to implement these AI labelling requirements risk losing “safe harbour” immunity from liability over third-party content.
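To make the declaration-and-traceability flow above more concrete, here is a minimal Python sketch of how a platform might record an uploader's declaration alongside a content hash and a unique identifier, then flag declarations that an automated check disputes. The `UploadDeclaration` record, its field names, the `detector_score` input, and the 0.8 review threshold are all hypothetical. The draft does not specify a metadata format or a verification method.

```python
import hashlib
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Platform size above which the article says declarations are required.
USER_THRESHOLD = 5_000_000


@dataclass(frozen=True)
class UploadDeclaration:
    """Hypothetical record tying an uploader's declaration to a piece of content."""
    uploader_id: str
    is_ai_generated: bool
    content_sha256: str
    # Unique identifier that could be embedded in the content's metadata.
    label_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    declared_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def declaration_required(user_count: int) -> bool:
    """True if the platform exceeds the user threshold."""
    return user_count > USER_THRESHOLD


def record_declaration(uploader_id: str, is_ai_generated: bool,
                       content: bytes) -> UploadDeclaration:
    """Create a declaration record keyed to a hash of the uploaded bytes."""
    digest = hashlib.sha256(content).hexdigest()
    return UploadDeclaration(uploader_id, is_ai_generated, digest)


def needs_review(declaration: UploadDeclaration, detector_score: float,
                 threshold: float = 0.8) -> bool:
    """Flag content declared as not AI-generated that a detector scores highly.

    `detector_score` stands in for the output of some automated tool (assumed
    to be in [0, 1]); the threshold is an arbitrary illustrative value.
    """
    return (not declaration.is_ai_generated) and detector_score >= threshold
```

Hashing the uploaded bytes gives each declaration a stable link to the exact file it describes, while the separate `label_id` is the kind of identifier that could travel with the content as embedded metadata.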

Content Removal and Oversight:

Only senior government officials (Joint Secretary rank or above) or Deputy Inspectors General in police forces may issue takedown orders, and each order must specify a clear legal basis. Orders will undergo monthly review by a Secretary-level officer to ensure necessity and proportionality. This is intended to enhance government accountability and limit arbitrary censorship.

Context of Rising Deepfake Cases:

India, with nearly 1 billion internet users across diverse ethnic and religious groups, is especially vulnerable to misinformation. Deepfake misuse has become a flashpoint:

- In 2023, a viral deepfake of actor Rashmika Mandanna entering an elevator sparked national debate and led Prime Minister Narendra Modi to call deepfakes a new “crisis.”

- Bollywood actors including Amitabh Bachchan, Aishwarya Rai Bachchan, Akshay Kumar, and Hrithik Roshan have filed lawsuits to protect their likenesses and “personality rights” from AI misuse.

- A film studio altered the ending of Raanjhanaa using AI without the consent of the director or actors, highlighting gaps in India’s legal protections.

Global Alignment:

India’s moves mirror and extend regulatory approaches elsewhere:

- The European Union’s AI Act requires synthetic media to carry detectable, machine-readable labels for text, images, audio, or video.

- China’s 2025 rules mandate visible AI symbols for chatbots, synthetic voices, and immersive content, supplemented with hidden watermarks.

- Denmark is considering legislation giving citizens copyright over their own likeness to request removal of AI-generated images or videos created without consent.

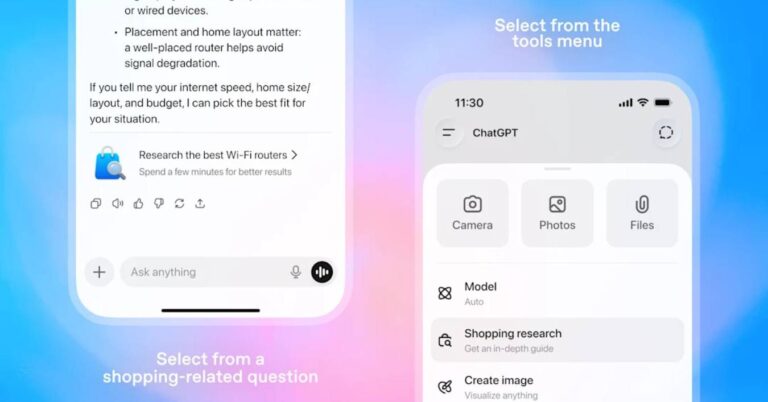

Industry Practices and Patchy Enforcement:

Companies like Meta, Google, YouTube, and Adobe already apply some AI labels, but enforcement is inconsistent:

- Instagram sometimes displays “AI Info” tags, though many AI-generated posts slip through.

- YouTube applies labels such as “Altered or synthetic content” with explanatory notes.

- Meta has worked with the Partnership on AI (PAI) to develop shared standards and invisible watermarking tools, in collaboration with OpenAI, Microsoft, Midjourney, and Shutterstock.

India’s proposals go further by obligating proactive verification and visible AI labelling, not just reactive tagging.

Industry Impact:

India is a critical growth market: OpenAI CEO Sam Altman noted in February 2025 that India is OpenAI’s second-largest market by users, with numbers tripling over the past year. Compliance will require large-scale technical adaptation by AI firms and social platforms.

Public Consultation:

Feedback on the proposed amendments is open to citizens and industry stakeholders until November 6, 2025, offering an opportunity to shape final provisions.

Why This Matters:

India is proposing one of the world’s strictest frameworks for AI labelling, one that would require visible markers on synthetic content while building in government accountability and platform responsibility. These measures aim to safeguard democratic processes, reduce the risks of misinformation, and provide a model for other nations grappling with deepfake technologies.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.
