Key Takeaway

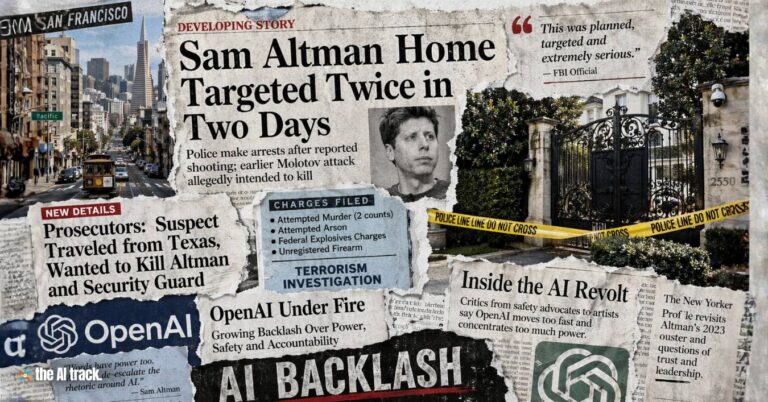

OpenAI has signed a deal with the Pentagon to deploy advanced AI systems in classified US military environments with contract-backed guardrails, as the Trump administration moved to phase out Anthropic across federal agencies and the Pentagon labeled Anthropic a supply chain risk.

OpenAI Signs Pentagon AI Deal – Key Points

The Story

OpenAI signed a Pentagon deal to deploy AI models in classified systems with guardrails against domestic mass surveillance and autonomous weapons, while President Donald Trump ordered federal agencies to stop using Anthropic and phase it out over six months.

OpenAI later published details saying the deployment will be cloud-only, with OpenAI retaining control of its safety stack and keeping cleared personnel in the loop, and that its agreement includes three redlines including a ban on high-stakes automated decisions.

Defense Secretary Pete Hegseth said Anthropic would be “immediately” designated a supply chain risk and barred military-linked contractors from any commercial activity with Anthropic. Anthropic said it will challenge any supply chain risk designation in court and refused to lift restrictions tied to surveillance and fully autonomous weapons.

The Facts

OpenAI signs Pentagon AI deal for classified systems

OpenAI said it reached an agreement “yesterday” to deploy advanced AI systems in classified environments, and said it asked the government to make the same terms available to all AI companies. OpenAI CEO Sam Altman also said the company will send forward-deployed engineers to help ensure model safety.

OpenAI defined three redlines for national security deployments

OpenAI listed three redlines: no use for mass domestic surveillance; no use to direct autonomous weapons systems; and no use for high-stakes automated decisions (citing examples such as “social credit”).

Cloud-only deployment and OpenAI-run safety stack

OpenAI said the deal is cloud-only and will not use “guardrails off” or non-safety-trained models, and will not deploy on edge devices, citing edge deployment as a pathway that could enable autonomous lethal weapons use. OpenAI said its architecture enables independent verification that redlines are not crossed, including running and updating classifiers.

Contract language ties “lawful purposes” to oversight and human control

OpenAI published contract language stating the Department of War may use the AI system for lawful purposes consistent with law, operational requirements, and established safety and oversight protocols, while also stating the system will not be used to independently direct autonomous weapons where law, regulation, or policy requires human control, and will not assume other high-stakes decisions requiring human approval.

OpenAI referenced existing DoD policy on autonomous and semi-autonomous systems

The published contract excerpt cited DoD Directive 3000.09 (dated 25 January 2023) describing requirements for verification, validation, and testing of AI in autonomous and semi-autonomous systems before deployment in realistic environments.

Contract language restricts domestic surveillance and domestic law-enforcement use

OpenAI published language stating that intelligence handling of private information must comply with the Fourth Amendment, and cited laws and policies including the National Security Act of 1947, the Foreign Intelligence Surveillance Act of 1978, Executive Order 12333, and applicable DoD directives requiring a defined foreign intelligence purpose. It also stated the AI system shall not be used for unconstrained monitoring of US persons’ private information, and shall not be used for domestic law-enforcement activities except as permitted by the Posse Comitatus Act and other applicable law.

Cleared OpenAI personnel “in the loop”

OpenAI said cleared forward-deployed engineers will help the government, with cleared safety and alignment researchers in the loop.

OpenAI said it opposed designating Anthropic a supply chain risk

OpenAI stated it does not think Anthropic should be designated as a supply chain risk and said it has made that position clear to the government.

OpenAI said its guardrails are designed to be enforceable even if laws change

OpenAI said the contract references surveillance and autonomous-weapons laws and policies “as they exist today,” and claimed that even if those standards change later, use of its systems must still remain aligned with the current standards reflected in the agreement.

OpenAI’s approach relies on cloud-only deployment, OpenAI-controlled safety stack, and cleared personnel involvement; how these controls are audited or enforced in practice is not detailed beyond OpenAI’s description and contract excerpts.

Federal ban on Anthropic products with a six-month phaseout

President Trump said on Truth Social he is directing every federal agency to immediately cease all use of Anthropic’s technology and phase it out over the next six months.

Hegseth said Anthropic would be “immediately” designated a supply chain risk and stated: “Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic.”

Anthropic refusal and court challenge

Anthropic said it will challenge any supply chain risk designation in court and said it would not change its position on mass domestic surveillance or fully autonomous weapons.

Timeline / What Changed

Tuesday: Hegseth called Anthropic CEO Dario Amodei to Washington, DC for a meeting as tensions escalated.

Thursday: Amodei said Anthropic would rather stop working with the Pentagon than accept demands tied to mass surveillance and fully autonomous weapons.

Friday: Trump ordered an immediate halt to Anthropic use across federal agencies with a six-month phaseout; Hegseth announced an immediate supply chain risk designation with contractor restrictions; Altman confirmed OpenAI’s Pentagon deal for classified systems with guardrails.

Saturday: OpenAI published a detailed description of its agreement, including three redlines, a cloud-only deployment design, and excerpts of contract language tied to surveillance and weapons policy.

OpenAI said its agreement has more guardrails than any previous classified AI deployment agreement, including Anthropic’s, but its published material offers no third-party validation of that comparison.

The Aftermath

With $110B in fresh capital, multi-gigawatt Nvidia compute commitments, and nearly 900 million weekly users, OpenAI now operates at infrastructure scale comparable to major global platforms, raising the stakes of how its defense partnerships are perceived. Yet a Community Note attached to Sam Altman’s public post said officials described the models as usable for “all lawful purposes,” introducing ambiguity around the scope of safeguards.

The reaction was immediate. Hashtags such as #CancelChatGPT trended as users shared account cancellations, while Anthropic’s Claude climbed to No. 2 on Apple’s U.S. free app rankings, up from No. 131 on January 30, 2026, positioned between ChatGPT at No. 1 and Google Gemini at No. 3. The episode illustrates how defense contracts and AI governance debates can rapidly influence consumer behavior and competitive dynamics.

Why This Matters

When OpenAI signs a Pentagon deal while simultaneously securing $110B in capital and multi-gigawatt compute commitments, it signals more than a U.S. procurement decision. It shows that integrating frontier AI systems into national institutions and politically sensitive domains such as defense and security carries global implications.

At this scale, governance language, deployment safeguards, and supply-chain alignment influence not only one country’s defense ecosystem but also international regulatory debates, alliance structures, and cross-border technology dependencies. Decisions made in Washington reverberate across Europe, Asia, and emerging markets where governments are defining their own AI sovereignty strategies.

With OpenAI signing a Pentagon deal at near-platform scale, the intersection of AI infrastructure, geopolitical alignment, and public trust becomes a defining factor in global competition, shaping how states, enterprises, and citizens evaluate who controls the next layer of digital power.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.
