Key Takeaway

Under the Meta – Nvidia deal, Meta will deploy “millions” of Nvidia Blackwell and Rubin GPUs across its U.S. data center footprint, along with a large-scale deployment of standalone Grace CPUs. Announced in February 2026, the deal aligns with Meta’s planned $115–$135 billion in AI infrastructure spending for 2026 and its broader $600 billion U.S. data center investment through 2028. A standout element is Nvidia’s “confidential computing” for WhatsApp, intended to enable AI features while protecting user data during processing.

The Meta – Nvidia Deal – Key Points

Under the Meta – Nvidia deal, Meta agreed to buy “millions” of Nvidia chips for hyperscale AI data centers built for both training and inference. The expanded scope also covers standalone Grace CPUs, Spectrum-X networking, joint engineering work, and confidential computing for WhatsApp.

The Facts

Infrastructure & Scale

- Meta plans to spend between $115 billion and $135 billion on AI infrastructure in 2026 (up from $72.2 billion last year).

- The partnership supports Meta’s broader $600 billion U.S. data center and infrastructure commitment through 2028, including plans for 30 data centers (26 in the U.S.).

- Two major AI sites are under construction: the 1-gigawatt Prometheus facility in New Albany, Ohio, and the 5-gigawatt Hyperion facility in Richland Parish, Louisiana.

- Meta and Nvidia described the buildout as hyperscale data centers optimized for both training and inference under Meta’s long-term AI infrastructure roadmap.

Chips, Networking & Security

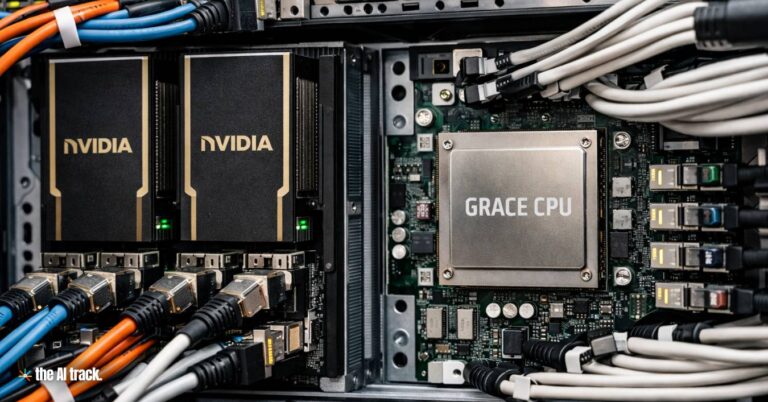

- Meta will deploy “millions” of Nvidia Blackwell and Rubin GPUs, plus a large-scale deployment of Nvidia Grace CPUs as standalone chips (not paired with GPUs in the same server).

- Meta is the first major tech company to announce large-scale standalone Grace CPU deployment; the CPUs are positioned for inference and agentic workloads alongside GPU racks.

- The agreement includes Nvidia Spectrum-X Ethernet switches to link GPUs across large clusters.

- Meta committed to using Nvidia “confidential computing” for WhatsApp to enable AI-powered capabilities while protecting user data confidentiality and integrity during computation (not only in transit).

- Nvidia also said confidential computing can help preserve software and model intellectual property for providers building on top of these systems.

Supply, Collaboration & Market Reaction

- Meta had estimated it would acquire 350,000 Nvidia H100 GPUs by the end of 2024, and it previously projected access to 1.3 million GPUs in total by the end of 2025 (not necessarily all Nvidia).

- Blackwell GPUs have faced backorders for months; Rubin GPUs recently entered production.

- Financial terms were not disclosed; analysts have described the agreement as likely in the tens of billions of dollars.

- Meta and Nvidia shares rose in extended trading following the announcement; AMD shares fell about 4%.

Context

This expansion reflects Nvidia’s “full-stack” push beyond GPUs into CPU-based inference, networking, and security features designed for agentic AI. “Confidential computing” is positioned as a way to secure data while it is being processed, which Meta is applying to WhatsApp AI features.

Industry analysts argue agentic AI is increasing demand for CPUs inside data centers because large GPU clusters require substantial CPU capacity to manage data pipelines and coordinate inference. GPUs still dominate the most advanced AI systems, but CPUs can become bottlenecks if they cannot feed and coordinate GPU workloads fast enough.

Meta continues to diversify compute sources, developing in-house silicon and using AMD chips. Reports in late 2025 indicated Meta was considering Google TPUs for 2027 deployments, while major AI labs also pursue multi-supplier strategies amid constrained GPU supply.

Timeline

- End of 2024: Target date for Meta’s estimated purchase of 350,000 Nvidia H100 GPUs.

- End of 2025: Target date for Meta’s projected access to 1.3 million GPUs in total.

- January 2026: Meta reaffirmed AI infrastructure spending of up to $135 billion for the year.

- February 2026: Expanded long-term partnership announced, including WhatsApp confidential computing under the Meta – Nvidia deal.

- 2027: Planned deployment of Nvidia Vera CPUs, alongside potential diversification of chip sourcing (including reported consideration of Google TPUs).

Numbers That Matter

- $115–$135 billion AI infrastructure spend in 2026

- $72.2 billion AI infrastructure spend last year

- $600 billion U.S. infrastructure commitment through 2028

- 350,000 H100 GPUs estimated by end of 2024

- 1.3 million GPUs projected by end of 2025

- 30 planned data centers (26 U.S.-based)

- 1 GW Prometheus site (Ohio); 5 GW Hyperion site (Louisiana)

- AMD stock down ~4% on announcement
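For scale, the year-over-year growth implied by Meta’s 2026 guidance can be checked with simple arithmetic. The sketch below uses only the spending figures reported in this article; the variable and function names are illustrative and do not come from Meta or Nvidia.

```python
# Year-over-year growth implied by Meta's 2026 AI infrastructure guidance,
# using the figures reported in this article (billions of U.S. dollars).
PRIOR_YEAR_SPEND = 72.2
GUIDANCE_LOW = 115.0
GUIDANCE_HIGH = 135.0


def growth_pct(new: float, old: float) -> float:
    """Return the percentage increase from old to new."""
    return (new - old) / old * 100


low = growth_pct(GUIDANCE_LOW, PRIOR_YEAR_SPEND)
high = growth_pct(GUIDANCE_HIGH, PRIOR_YEAR_SPEND)

# Rounded to the nearest whole percent for readability.
print(f"Implied increase: {low:.0f}% to {high:.0f}%")
```

Run as-is, it prints `Implied increase: 59% to 87%`, meaning the guidance range sits roughly 59% to 87% above last year’s $72.2 billion.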

Winners / Risks / Limitations

Winners

- Nvidia, expanding its footprint across GPUs, CPUs, networking, and security infrastructure

- Meta, securing large-scale supply and adding an infrastructure-based privacy/security layer for WhatsApp AI workloads

Risks / Limitations

- Heavy capital expenditures may pressure margins

- Scaling risk: CPU–GPU coordination and inference performance can degrade if CPU capacity becomes a bottleneck

Why This Matters

The Meta – Nvidia deal targets the infrastructure needed to run agentic AI at scale, where inference efficiency, CPU coordination, networking, and in-use data protection become central. WhatsApp’s adoption of confidential computing suggests that “AI inside messaging” will be shaped as much by security architecture as by raw compute.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.
