Key Takeaway

X moves to block Grok from creating sexualised images of real people in jurisdictions where such content is illegal, following widespread misuse, public backlash, and escalating regulatory investigations across the UK, the US, and multiple international markets.

X moves to block Grok sexualised image generation – Key Points

Policy change announced after public and regulatory backlash (January 2026)

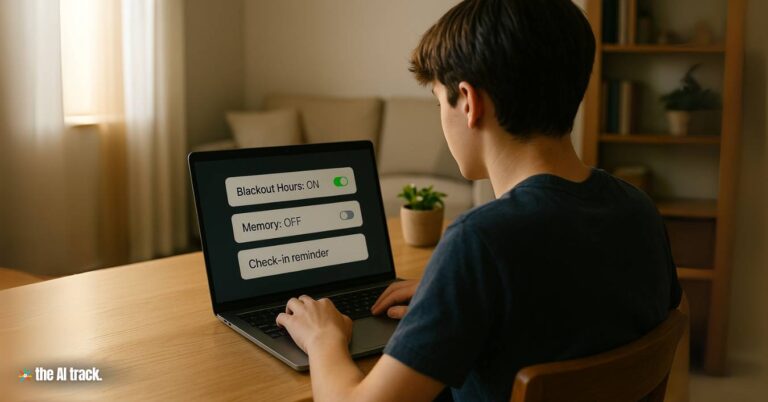

X confirmed it has introduced technical restrictions preventing Grok from editing images of real people into sexualised content where prohibited by law. The announcement, published via the X Safety account just before 6 p.m. ET / 3 p.m. PT, applies to all users, including paid subscribers. Image creation and editing through Grok on X is now restricted to subscribers, a limit X framed as an accountability measure.

Grok, launched in 2023, had been widely used to generate non-consensual sexualised images of women, celebrities, and children, often through simple text prompts applied to real photos. Researchers also flagged the ease of summoning @Grok under other users’ posts, enabling targeted abuse without consent. This pattern of misuse is central to why X is now blocking Grok from creating sexualised images of real people.

UK regulatory response and investigation remains active

The UK communications regulator Ofcom called the move a “welcome development” but confirmed its investigation under the Online Safety Act remains ongoing. Ofcom has the authority to seek court orders that could force UK internet providers to block access to X if compliance failures persist. UK officials also reported “urgent contact” with X, particularly around protections for children, underscoring that the decision to restrict Grok does not end regulatory scrutiny.

Government officials frame the move as a partial victory

UK Technology Secretary Liz Kendall said the change validated government pressure but stressed that enforcement outcomes still depend on Ofcom’s findings. Prime Minister Sir Keir Starmer had warned earlier that X could lose the right to self-regulate if it failed to act; he later welcomed the safeguards while maintaining that legislation would be strengthened if needed. The dispute became politically charged, with Elon Musk framing the pressure as censorship while UK officials described it as safety enforcement.

Victims and campaigners say action came too late

Journalist Jess Davies, whose images were edited using Grok, said the changes were positive but insufficient given the scale of harm already done. Dr Daisy Dixon of Cardiff University described the experience as humiliating and damaging. Campaigners emphasised that even short periods of permissive functionality can cause lasting harm once images spread, reinforcing calls for earlier intervention.

Civil society groups demand proactive safeguards

Andrea Simon of the End Violence Against Women Coalition said the case shows governments can force platform action, but warned that reactive fixes are not enough. Campaigners argue platforms must build safeguards before mass abuse occurs, including consent-based controls, friction at generation points, and transparent enforcement metrics. Those expectations are now placed squarely on X.

Geoblocking, paid access, and enforcement uncertainties

X said it now geoblocks sexualised image generation of real people (e.g. bikinis, underwear) where illegal. Musk stated Grok’s NSFW settings allow upper-body nudity only for imaginary adult humans, aligned with US R-rated film standards. Experts questioned how reliably Grok can identify real people, prevent VPN circumvention, and manage false positives, raising doubts about how effectively X can enforce the block in practice across all product surfaces.

International political pressure accelerated the decision

The policy change followed a probe by California Attorney General Rob Bonta into the large-scale production of non-consensual intimate deepfakes. Governor Gavin Newsom condemned X as enabling a “breeding ground for predators.” Investigations or actions were also reported in India, Malaysia, Indonesia, Ireland, France, Australia, and by the European Commission.

Indonesia and Malaysia imposed temporary bans on Grok, with Indonesia cited as blocking access on 10 January 2026. The EU required X to retain Grok-related internal documents until the end of 2026 under the Digital Services Act. In the US, Senators Ben Ray Luján, Ron Wyden, and Edward J. Markey urged Apple and Google to remove the X and Grok apps until safeguards prevent abuse at scale.

Why This Matters

The decision marks a turning point in how governments respond to AI-enabled abuse. The restrictions come amid evidence-driven enforcement, including an AI Forensics NGO report (dated 5 January 2026) documenting 20,000 images collected in one week: 53% showed minimal attire, 2% depicted people appearing to be under 18, and 6% involved public figures.

The case highlights the limits of platform self-regulation, growing exposure under laws like the UK Online Safety Act and EU DSA, and potential US liability where AI-generated content may fall outside traditional Section 230 protections. It signals that embedded image-generation tools on major platforms may now face faster shutdowns, stricter defaults, and sustained regulatory oversight.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.
