Key Takeaway:

Google is integrating Gemini AI into Google Maps, adding conversational, multimodal, and proactive capabilities that turn navigation into a hands-free, context-aware experience. The rollout begins in November 2025 on Android and iOS, with Android Auto support to follow.

Google Maps Integrates Gemini AI – Key Points

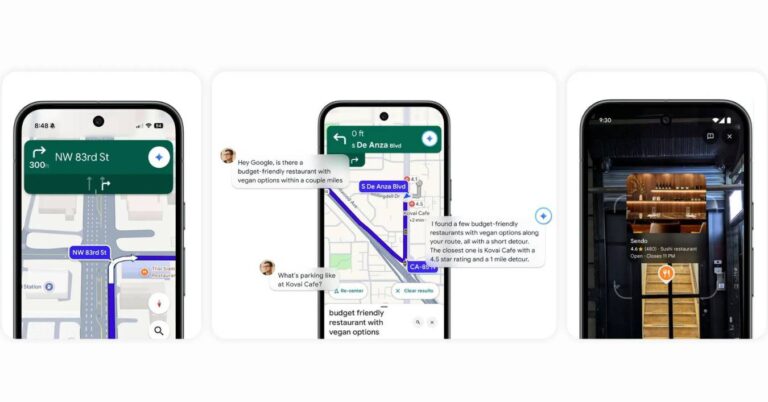

Conversational Gemini Assistant Transforms Navigation

Maps now supports multi-step, natural-language requests that chain several tasks in one conversation. For example: “Find a budget-friendly restaurant with vegan options within a couple of miles along my route. What’s parking like and is it busy?” followed by “OK, let’s go there.” Gemini can also share ETAs, add Calendar events (e.g., “soccer practice tomorrow at 5 p.m.”), summarize news or sports during the drive, and accept spoken traffic reports such as “I see an accident” or “Looks like there’s flooding ahead.” The feature is rolling out over the coming weeks on Android and iOS everywhere Gemini is available.

Landmark-Based Guidance Enhances Real-World Orientation

Instead of distance-only prompts (“turn right in 500 ft”), Maps gives landmark directions such as “turn right after the Thai Siam Restaurant,” and visually highlights the place on the map. Gemini cross-references more than 250 million places with Street View imagery to show visible, relevant landmarks near turns. Availability: rolling out in the U.S. on Android and iOS, with broader regions expected later.

Proactive Traffic Alerts Arrive Before Disruptions

Maps now proactively warns drivers about closures, accidents, and traffic jams, even when they are not actively navigating. Alerts can appear on top of other apps (e.g., a music player), giving drivers time to reroute. Availability: rolling out first in the U.S. on Android, with additional platforms and regions to follow.

Lens in Maps Adds Conversational Local Discovery

Lens built with Gemini lets users raise their phone to identify nearby places (restaurants, cafés, landmarks) and then ask questions such as “What is this place and why is it popular?” or “What’s the vibe inside?” Gemini combines live visuals, reviews, and Maps listings, and can answer specific questions (e.g., “Do they have French butter croissants?”). Availability: rolling out in the U.S. later this month on Android and iOS, with gradual expansion to follow.

Rollout Schedule and Ecosystem Integration

The Gemini upgrade begins in November 2025 on Android and iOS; Android Auto support is “on the way.” The integration aligns Maps with Google’s broader push to standardize Gemini across its consumer apps, linking Search, Assistant, Calendar, Photos, and Maps into a unified, multimodal layer for everyday tasks.

Why This Matters:

Navigation shifts from reactive GPS to an adaptive co-pilot that anticipates issues, understands context, references real-world landmarks, and executes cross-app tasks without touching the screen. This elevates safety, reduces friction in dense urban driving, and signals Google’s intent to make Gemini the common intelligence fabric across its services.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.
