Key Takeaway

Google’s Gemini 2.5 Flash Image adds multi-image fusion, character consistency, style transfer, and real-world reasoning, along with creative storytelling sequences and high-fidelity edits. It is available through the Gemini app, Google AI Studio, Vertex AI, and the Gemini API, and ships with watermarking and safety guardrails. Google reports that it outperforms rival models on prompt accuracy and editing benchmarks.

Google Launches Gemini 2.5 Flash Image – Key Points

Launch and Purpose

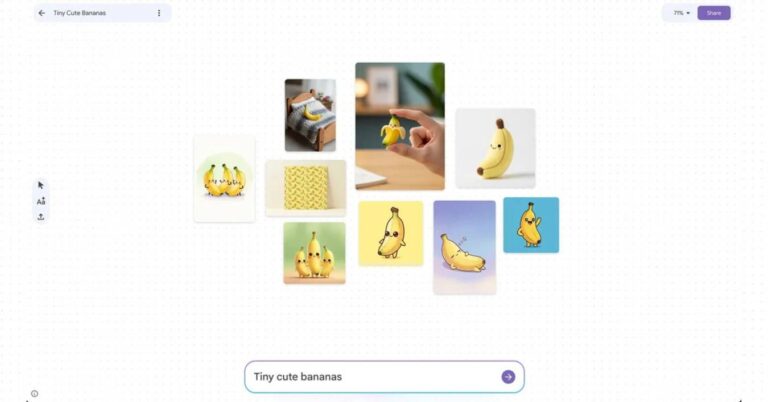

Google unveiled Gemini 2.5 Flash Image (nicknamed “nano-banana”) as the successor to Gemini 2.0 Flash's image generation, addressing requests for higher-quality images, better prompt handling, and stronger editing control. While 2.0 Flash was praised for affordability and low latency, it lacked fidelity and editing depth. Gemini 2.5 Flash Image aims to close those gaps with enterprise-ready tools and creative versatility. Some features roll out first on the Gemini mobile app, with parity for web and API expected shortly.

Superior Prompt Accuracy and Benchmarks

Gemini 2.5 Flash Image significantly improves text-to-image accuracy. Google reports that it frequently outperforms OpenAI’s GPT-4o on prompt compliance and that it scored higher on human-rated Elo benchmarks for image editing, which Google presents as evidence that it is more reliable than many image-only models.

Multi-Image Fusion in Practice

The model blends up to three input images into realistic composites. The Register tests demonstrated its ability to create missing body parts and adjust clothing details, while AI Studio’s “Home Canvas” app allows users to drag products into new environments. DeepMind further highlights creative remixing of two or more images to generate entirely new visual scenes.

Character Consistency

Gemini keeps people, pets, and objects recognizable across multiple edits. The Co-Drawing demo app demonstrates continuity across outfits, lighting, poses, and even time periods. For instance, Gemini can reimagine a person “as a baker, sculptor, or teacher,” or show them across decades. This consistency suits catalogs, branding, and serialized storytelling.

Targeted Transformations

Natural language instructions allow precise, localized edits. Examples include removing helmets, mirrors, or furniture, recoloring hair and clothing, or altering posture. Google’s PixShop template app showcases these edits with intuitive UI and prompt controls. Gemini’s outputs preserve fine textures, from clothing fabric to environmental lighting.

Style Transfer

Gemini 2.5 supports pattern and texture migration between objects, e.g., overlaying butterfly patterns on dresses or floral textures on boots. This makes it especially useful for design and fashion industries.

World Knowledge and Real-World Reasoning

Gemini extends beyond aesthetics, enabling reasoning-driven edits. Demonstrations include visualizing cause-and-effect scenarios (e.g., balloon drifting toward a cactus, then popping). This leverages Gemini’s semantic understanding for education, tutorials, and narrative design.

Sequential Storytelling

Gemini 2.5 Flash Image can also generate multi-image narratives. DeepMind demos include an 8-part 1960s music story, a 12-part noir detective sequence, and a 9-part superhero arc, all told visually without text. This opens applications for comics, brand campaigns, and visual storytelling.

Template Adherence

Standardized asset creation remains a strength: real estate cards, employee IDs, and product mockups can all be produced in uniform style. This ensures scalability across enterprise workflows.

Developer and User Ecosystem

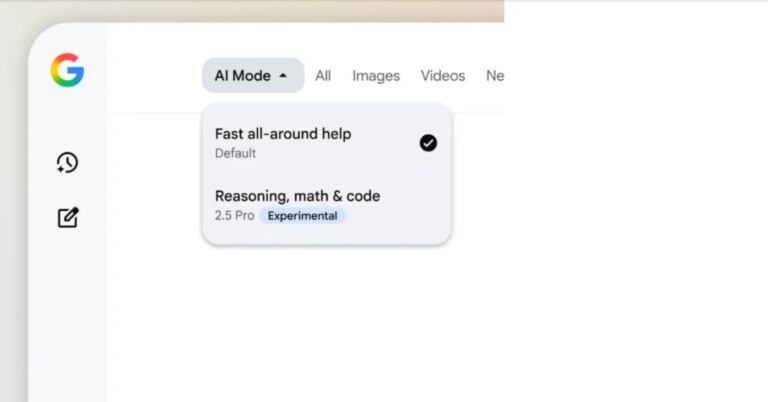

Gemini 2.5 Flash Image integrates across platforms:

Gemini app (select the “Flash” model in the chat bar).

Gemini API, Google AI Studio, and Vertex AI for enterprise deployment.

Apps can be prototyped and deployed directly from AI Studio or saved to GitHub.

Hands-on examples demonstrate quick prototyping (e.g., “build me an image editing app that lets users upload and apply filters”).
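For developers taking the Gemini API route, a multi-image fusion request might look roughly like the sketch below. It assumes the `google-genai` Python SDK and the preview model id `gemini-2.5-flash-image-preview`, both of which should be checked against Google's current documentation. The helper names (`describe_inputs`, `fuse_images`) are hypothetical, and the three-image cap is taken from this article rather than from the API reference.

```python
import mimetypes
from pathlib import Path

# Assumptions: the model id may differ by release; the cap of three input
# images is the figure reported in this article, not a documented API limit.
MODEL_ID = "gemini-2.5-flash-image-preview"
MAX_INPUT_IMAGES = 3


def describe_inputs(image_paths):
    """Validate the image count and pair each path with a guessed MIME type."""
    if not 1 <= len(image_paths) <= MAX_INPUT_IMAGES:
        raise ValueError(f"expected 1 to {MAX_INPUT_IMAGES} images, got {len(image_paths)}")
    described = []
    for path in image_paths:
        mime_type, _ = mimetypes.guess_type(str(path))
        if mime_type is None or not mime_type.startswith("image/"):
            raise ValueError(f"not a recognized image file: {path}")
        described.append((Path(path), mime_type))
    return described


def fuse_images(prompt, image_paths, output_path="fused.png"):
    """Send a prompt plus input images and save the first returned image, if any."""
    # Imported lazily so describe_inputs() works without the SDK installed.
    from google import genai
    from google.genai import types

    client = genai.Client()  # reads the API key from the environment
    parts = [
        types.Part.from_bytes(data=path.read_bytes(), mime_type=mime_type)
        for path, mime_type in describe_inputs(image_paths)
    ]
    response = client.models.generate_content(
        model=MODEL_ID,
        contents=[prompt, *parts],
    )
    # The response can mix text and image parts; keep the first image found.
    for part in response.candidates[0].content.parts:
        if part.inline_data is not None:
            Path(output_path).write_bytes(part.inline_data.data)
            return Path(output_path)
    return None
```

A call such as `fuse_images("Place the sofa from the first photo in the second room", ["sofa.png", "room.jpg"])` would then write the composite to `fused.png`. The file names here are placeholders.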

Pricing Model

Set at $30 per 1M output tokens, with each image ~1,290 tokens (~$0.039 per image), pricing remains unchanged from Gemini 2.0. This low cost undercuts legacy tools like Photoshop for many editing tasks.
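The per-image figure follows directly from the two numbers quoted above. The short sketch below reproduces the arithmetic and estimates how many images a given budget covers. The function names are illustrative, and the token count per image is the approximate value cited in this article.

```python
import math

PRICE_PER_MILLION_OUTPUT_TOKENS_USD = 30.0  # quoted price
TOKENS_PER_IMAGE = 1290                     # approximate, as quoted


def cost_per_image(tokens=TOKENS_PER_IMAGE,
                   price_per_million=PRICE_PER_MILLION_OUTPUT_TOKENS_USD):
    """Cost in USD of one generated image at the quoted token price."""
    return tokens * price_per_million / 1_000_000


def images_for_budget(budget_usd):
    """Number of whole images that fit within a budget in USD."""
    return math.floor(budget_usd / cost_per_image())


print(f"Cost per image: ${cost_per_image():.4f}")
print(f"Images for $10: {images_for_budget(10)}")
```

At these figures one image costs $0.0387, which rounds to the $0.039 quoted above.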

Partnerships and Distribution

To broaden access, Google partnered with OpenRouter.ai (3M+ developers) and fal.ai (a generative media platform). Gemini 2.5 Flash Image is notably the first image-generation model on OpenRouter, among 480+ hosted models.

Performance and Speed

Cloud processing ensures near-instant results. Reviewers noted edits complete in seconds even on older Pixel devices, making workflows dramatically faster than manual design tools.

Transparency and Watermarking

Gemini 2.5 includes dual watermarking: a visible label plus SynthID, DeepMind’s invisible watermark embedded in every output. This strengthens accountability and reduces risks of fake content.

Safety and Filtering

DeepMind emphasizes data filtering, red teaming, and labeling to minimize harmful or biased outputs. Guardrails block explicit pornography and flag sensitive content, though limitations exist (e.g., celebrities like Taylor Swift can still be generated). The system has been stress-tested on LMArena benchmarks under its “nano-banana” codename.

Future Development

Google continues to refine factual detail rendering, long-form text integration in images, and reliability of character consistency. Feedback channels remain open on forums and X (Twitter). A stable release is expected in the coming weeks (late 2025).

Why This Matters

Gemini 2.5 Flash Image represents a leap in creative control, combining technical precision with imaginative flexibility. From multi-image fusions and pattern transfers to serialized storytelling and reasoning-based edits, it offers a wide toolkit for developers, educators, and creatives. Dual watermarking, filtering, and red teaming reinforce safety and trust, positioning Google as both a technical leader and ethical standard-setter. Its broad rollout across mobile, web, and enterprise ecosystems sets the stage for AI-driven disruption of branding, education, design, and entertainment.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.
