Midjourney’s V1 Video is the company’s first AI-powered animation tool. It transforms static images into 5-second animated clips, extendable to 21 seconds, on plans starting at $10/month. The release is a major step in Midjourney’s roadmap toward real-time AI simulations, with an emphasis on accessibility, performance, and creative control. A pending lawsuit from Disney and Universal adds urgency to questions about the governance of AI-generated content.

Midjourney Launches V1 Video – Key Points

- Launch & Access

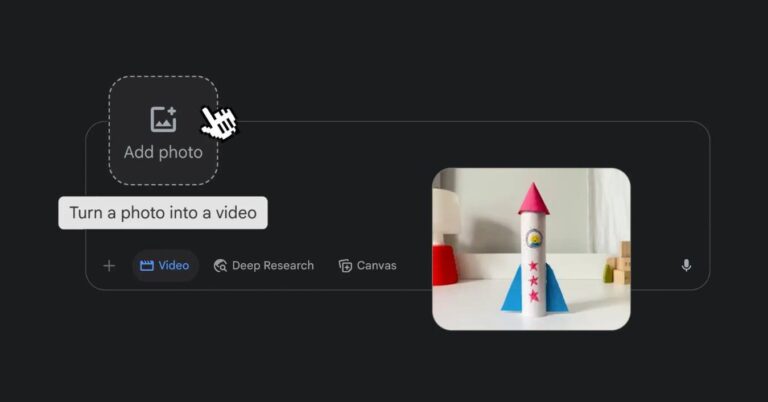

- Released on June 18, 2025, the V1 Video model is available via Midjourney’s web interface. While login via Discord is supported, the full animation functionality is web-only.

- Users can animate both Midjourney-generated images and user-uploaded assets by selecting the “Animate” function.

- Two core animation workflows are provided:

- Automatic Mode: Applies a generic motion prompt to create movement instantly.

- Manual Mode: Lets users define a specific motion behavior through custom text input.

- Two motion settings are available: Low motion (for subtle movement) and High motion (for full-scene dynamic motion).

- Output duration: 5 seconds by default. Each extension adds 4 seconds, up to a maximum of 21 seconds (four extensions).

- Users can drag and drop outside images and define them as “start frames” for a V1 Video animation.
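The duration rule above is simple arithmetic, and a minimal sketch makes the limits explicit. The constants come from the figures in this article; the function names are hypothetical and are not part of any Midjourney API.

```python
# Figures taken from the article; names are illustrative only.
BASE_SECONDS = 5        # length of the initial clip
EXTENSION_SECONDS = 4   # seconds added by each extension
MAX_SECONDS = 21        # stated maximum clip length


def max_extensions() -> int:
    """Number of extensions that fit under the stated maximum."""
    return (MAX_SECONDS - BASE_SECONDS) // EXTENSION_SECONDS


def clip_length(extensions: int) -> int:
    """Clip length in seconds after the given number of extensions."""
    if extensions < 0 or extensions > max_extensions():
        raise ValueError(f"extensions must be between 0 and {max_extensions()}")
    return BASE_SECONDS + EXTENSION_SECONDS * extensions


if __name__ == "__main__":
    for n in range(max_extensions() + 1):
        print(f"{n} extension(s): {clip_length(n)} s")
```

Under these figures, four extensions take a clip from 5 seconds to exactly 21 seconds.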

- Subscription & Cost Structure

- The $10/month plan includes 3.3 hours of fast GPU time, roughly translating to 200 image generations.

- A single V1 Video job produces four 5-second clips and consumes compute equivalent to ~8× the cost of an image job.

- Midjourney estimates that each second of video costs about one image’s worth of compute, which it says makes V1 Video over 25× cheaper than typical video generators.

- Pricing is subject to adjustment based on server load and community usage. A Video Relax Mode for Pro-tier users is under evaluation to reduce costs for longer renders.

- Competitor pricing:

- OpenAI Sora: $20–$200/month

- Google Flow: $20–$249/month

- Adobe Firefly: From $9.99/month (20 videos)

- Runway Gen-4 Turbo: From $12/month
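As a rough illustration of what the $10/month plan buys if it were spent entirely on video, the sketch below combines the figures quoted above. It assumes the "200 image generations" are 200 image jobs and that the ~8× multiplier holds exactly. Both are simplifications, and since pricing is subject to adjustment the results are only a back-of-the-envelope estimate. All names are hypothetical.

```python
# Figures quoted in the article; treated as exact for this estimate.
PLAN_PRICE_USD = 10.0        # monthly price of the entry plan
IMAGE_JOBS_PER_MONTH = 200   # assumption: "200 image generations" means 200 jobs
VIDEO_COST_MULTIPLIER = 8    # one video job costs about 8 image jobs
CLIPS_PER_VIDEO_JOB = 4      # each video job returns four clips
SECONDS_PER_CLIP = 5         # each clip is 5 seconds long


def video_budget() -> dict:
    """Estimate monthly video output if the whole plan is spent on video."""
    video_jobs = IMAGE_JOBS_PER_MONTH / VIDEO_COST_MULTIPLIER
    seconds = video_jobs * CLIPS_PER_VIDEO_JOB * SECONDS_PER_CLIP
    return {
        "video_jobs": video_jobs,
        "total_seconds": seconds,
        "usd_per_video_job": PLAN_PRICE_USD / video_jobs,
        "usd_per_second": PLAN_PRICE_USD / seconds,
    }


if __name__ == "__main__":
    for name, value in video_budget().items():
        print(f"{name}: {value:.2f}")
```

Under these assumptions the plan covers about 25 video jobs per month, or roughly 500 seconds of footage before any extensions.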

- Features & Output Quality

- The V1 Video model supports image-to-video only (text-to-video not yet supported).

- Resolution is 480p, aimed at rapid prototyping, social media, and concept visualization.

- The interface is optimized for accessibility, allowing creators without technical backgrounds to produce animated content.

- Midjourney confirms future updates will introduce higher resolutions, better motion refinement, and integration with ongoing image model improvements.

- Roadmap & Vision

- Midjourney’s V1 Video is framed as the second phase in a four-part plan:

- Image Models

- Video Models

- 3D Models

- Real-Time Simulation Engines

- The goal: enable real-time, explorable AI-generated worlds where users control perspective, interaction, and motion.

- Midjourney expects that these systems will become affordable and usable for everyday users in the near future.

- Competitive Context

- The V1 Video model competes in an increasingly crowded market of AI video generators, including:

- OpenAI’s Sora

- Google’s Veo 3 and Flow

- Adobe’s Firefly Video

- Meta’s MovieGen

- Runway Gen-4 Turbo

- While rivals focus on text-to-video generation, cinematic realism, or enterprise-scale output, Midjourney aims to provide fast, fun, and budget-friendly tools for a wide creative audience.

- Legal & Ethical Considerations

- Disney and Universal sued Midjourney in June 2025, accusing the company of illegally training its models on copyrighted material.

- The complaint names the V1 Video rollout as a critical escalation of the alleged IP infringement.

- The lawsuit brands Midjourney as a “virtual vending machine” for unauthorized derivatives.

- Midjourney has not yet commented formally, though the company publicly urges responsible use of its platform.

- Broader ethical concerns include:

- Rise in deepfake content, such as the viral “emotional support kangaroo” incident.

- Federal criminalization of explicit AI-generated deepfakes in the U.S.

- Growing demand for transparency around training data and content provenance in AI tools.

Why This Matters

- Creative Access at Scale: V1 Video lowers the barrier for creators to animate images affordably, making animation tools available to individuals and teams of any size.

- Early Step Toward Immersive AI: The V1 Video model is a tangible step toward Midjourney’s broader plan for open-world, real-time interactive simulations.

- Market Disruption: Offering near-instant video generation at a fraction of competitors’ costs, V1 Video redefines pricing expectations in AI visual production.

- Regulatory Pressure Rises: Legal scrutiny around V1 Video highlights an inflection point in the governance of synthetic media tools, copyright frameworks, and content ethics.
