Key Takeaway:

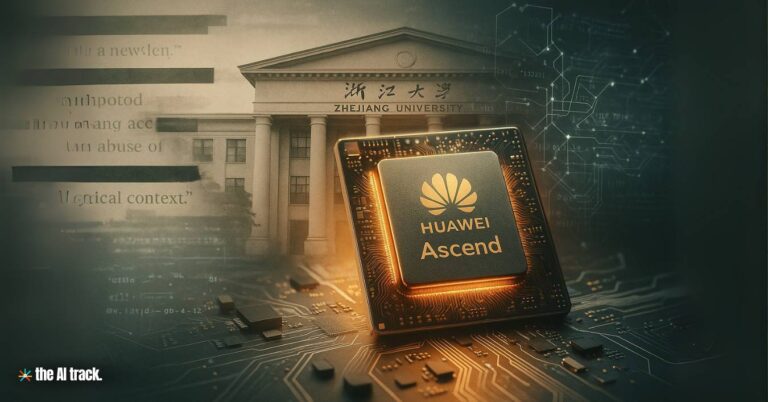

Huawei, in collaboration with Zhejiang University, unveiled DeepSeek-R1-Safe, a large language model trained on 1,000 Huawei Ascend AI chips. The model is designed to comply with China’s strict AI regulations and is described as “nearly 100% successful” in blocking politically sensitive content while keeping performance close to that of its predecessor, DeepSeek-R1.

DeepSeek-R1-Safe – Key Points

Development and Launch Ceremony

DeepSeek-R1-Safe was unveiled at Huawei Connect 2025 in Shanghai. The ceremony was attended by Chen Chun (Academician, Chinese Academy of Engineering), Zhang Dixuan (President, Huawei Ascend Computing Business), Ren Kui (Dean, Zhejiang University College of Computer Science and Technology), and Jiang Ming (Huawei Fellow, Computing Architecture and Design). Additional attendees included Zhejiang University faculty and Huawei research directors, underscoring a flagship university–industry collaboration. Huawei also outlined chip and computing power roadmaps alongside the release.

Regulatory Context in China

Chinese regulators require AI models to embody “socialist values” and avoid politically sensitive content. Chatbots such as Baidu’s Ernie Bot already refuse to answer political queries. DeepSeek-R1-Safe extends this framework with stronger filtering and policy-aligned safety tooling to facilitate compliant deployment in public-facing products and services.

Performance Metrics

Testing confirmed nearly 100% success in filtering 14 categories of harmful content (toxic speech, politically sensitive subjects, incitement to illegal activity, etc.). Against advanced jailbreak techniques (contextual role-play, scenario-based manipulation, and encrypted encoding), the model achieved a defense success rate above 40%, indicating some robustness while highlighting the gaps that remain against sophisticated circumvention.

Security Defense Benchmarking

The model demonstrated an 83% overall safety defense capability, outperforming peers such as Alibaba’s Qwen-235B and DeepSeek-R1-671B by 8–15% under identical conditions. On general benchmarks (MMLU, GSM8K, C-Eval), DeepSeek-R1-Safe showed less than 1% degradation versus DeepSeek-R1, preserving utility while strengthening safeguards.

Industry Context and Market Impact

Earlier launches of DeepSeek-R1 and DeepSeek-V3 rattled global markets, contributing to a January 2025 selloff in Western AI stocks. DeepSeek-R1-Safe reinforces Huawei’s strategy to lead the domestic AI market with politically compliant, high-performing models. The announcement coincided with Huawei’s next-generation Ascend AI hardware and a comprehensive open-source software strategy (compilers, runtime drivers, ecosystem tooling).

Research and Strategic Vision

Professor Ren Kui detailed a secure, end-to-end post-training framework spanning curated safety corpora, balanced safety training, and an integrated hardware–software stack. The team reported China’s first secure training of a trillion-parameter model on a thousand-card cluster, plus tooling for synchronized dependencies, shared data/weights, and collaborative training/inference. The model is fully open-sourced on ModelZoo, GitCode, GitHub, Gitee, and ModelScope, enabling broad academic/industrial adoption.

Academic and Ecosystem Contributions

Chen Chun framed the release as a benchmark for safe, trustworthy AI, aligning with the State Key Laboratory of Blockchain and Data Security (approved November 2022) and China’s goals for high-level tech self-reliance. The initiative emphasizes an ecosystem of independent innovation and deeper Huawei–university collaboration.

International Reaction and Strategic Implications

Coverage noted global concerns about adapting open AI models to politically controlled contexts, with implications for international cooperation, investment, and standards. Huawei highlights that compliance does not materially impair day-to-day functionality, presenting DeepSeek-R1-Safe as a template other Chinese AI systems can follow to strengthen domestic autonomy while addressing sensitive-content handling requirements.

Why This Matters:

DeepSeek-R1-Safe sets a reference point for policy-aligned AI safety at scale, pairing Huawei’s hardware/ecosystem maturity with Zhejiang University’s research depth. By outperforming domestic peers and retaining performance on standard tasks, the model advances censorship-compliant, production-ready AI in China. The open-source release, hardware roadmaps, and software strategy signal ambitions to shape local and global AI ecosystems while preserving state-aligned content controls.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.
