Key Takeaway

Meta has launched Muse Spark, a new AI model for Meta AI that adds image understanding, tool use, visual chain of thought, and parallel task handling. It is available today in the Meta AI app and at meta.ai, with rollout to WhatsApp, Instagram, Facebook, Messenger, and Meta AI glasses planned in the coming weeks.

Meta Launches Muse Spark AI – Key Points

The Story

Muse Spark is the first model in Meta Superintelligence Labs’ new Muse family and the first public release from the AI effort Meta has rebuilt over the past nine months. Meta positions it as a natively multimodal model built for reasoning, tool use, and multi-agent orchestration, including a new Contemplating mode that runs multiple agents in parallel for harder tasks. The launch also marks a broader strategic shift: Muse Spark starts in Meta’s own app and website, remains proprietary for now, and is tied to consumer use cases such as shopping, health questions, visual assistance, and everyday task completion.

The Facts

Meta says Muse Spark is now powering the Meta AI assistant.

It is available today in the Meta AI app and at meta.ai, with rollout to WhatsApp, Instagram, Facebook, Messenger, and Meta AI glasses planned for the coming weeks.

Muse Spark is the first model in the Muse family and the first step in Meta’s new scaling plan.

Meta says it is the first product from Meta Superintelligence Labs, with larger versions already in development.

Muse Spark was built over the past nine months by the team led by Alexandr Wang and developed internally under the codename Avocado.

Meta presents it as the first output of a broader overhaul of its AI effort.

Meta says Muse Spark is natively multimodal.

The model supports voice, text, and image inputs, along with tool use, visual chain of thought, and multi-agent orchestration. It currently produces text-only output.

Meta says it rebuilt its AI stack over the past nine months.

The overhaul covered model architecture, optimization, and data curation, with the goal of improving efficiency across training and deployment.

Meta AI now has two main reasoning modes, with a more advanced parallel-reasoning option also rolling out.

Instant is for quick questions, Thinking is for more complex tasks, and Contemplating mode runs multiple agents in parallel for harder problems.

Meta says Contemplating mode improves performance on difficult benchmarks.

In Meta’s reported results, Contemplating mode achieved 58% on Humanity’s Last Exam and 38% on FrontierScience Research. The feature will roll out gradually in meta.ai.

Muse Spark expands Meta AI’s multimodal image understanding.

Users can show Meta AI photos, products, labels, charts, shelves, and real-world scenes and ask questions based on what the model sees. Examples include estimating calories from a meal photo and visualizing how an object such as a mug would look on a shelf.

Meta says Muse Spark is designed for interactive real-world assistance.

Example uses include troubleshooting home appliances with dynamic annotations, creating minigames, analyzing food nutrition, and explaining which muscles are activated during exercise.

Meta says Muse Spark can respond to health questions with more detail.

Meta says it collaborated with more than 1,000 physicians to curate training data for more factual and comprehensive health responses, including interactive explanations based on images and charts.

The Meta AI app is getting a dedicated Shopping mode in the U.S.

Meta says it is designed to help users choose what to wear, style a room, and find gifts, drawing ideas from creators and communities across Facebook, Instagram, and Threads. The system is also intended to surface products users can buy directly.

Muse Spark’s training is more efficient than Meta’s earlier recipe, but the model still trails top rivals in some areas.

Meta says its new pretraining setup can reach the same capability level with more than an order of magnitude less compute than Llama 4 Maverick. At the same time, Muse Spark is described as competitive in language and visual understanding while still lagging in coding and some abstract reasoning. On Artificial Analysis’s broad benchmark index, it tied for fourth place.

How to Access / Pricing

Muse Spark is available today through the Meta AI app and meta.ai. Broader rollout is planned across WhatsApp, Instagram, Facebook, Messenger, and Meta AI glasses in the coming weeks. Meta is also opening a private API preview to select users and has indicated that paid API access may follow later. Current versions are free to use, though Meta may impose rate limits. Shopping mode is the clearest feature limitation at launch, beginning only in the U.S. through the Meta AI app.

Benchmarks / Evidence Check

Meta says Muse Spark performs competitively in multimodal perception, reasoning, health, and agentic tasks, but several of the headline benchmark figures are self-reported by Meta. Meta says Contemplating mode reached 58% on Humanity’s Last Exam and 38% on FrontierScience Research, and it points to a methodology document for further detail. Meta also says its updated pretraining recipe can reach the same capability level with more than an order of magnitude less compute than Llama 4 Maverick. Outside evaluations place Muse Spark among leading models in language and visual understanding, but behind stronger rivals in coding and some abstract reasoning.

Risks / Limitations

Several limitations are clear. Meta has not disclosed Muse Spark’s size, which is a standard comparison point for frontier models. The company says the model still has gaps in long-horizon agentic systems and coding workflows, and key capabilities such as Contemplating mode are rolling out gradually rather than appearing everywhere at launch. Meta says its safety evaluations found the model within safe margins for the risk categories it measured, but full results are still pending in a forthcoming Safety & Preparedness Report. Third-party testing on a near-launch checkpoint found unusually high evaluation awareness, which Meta says warrants further research. Finally, Meta’s privacy policy leaves broad room for how data shared with its AI system may be used.

What to Watch Next

Meta says larger Muse models are already in development, and some future versions are expected to be released openly. The next milestones include broader rollout across Meta’s apps and glasses, gradual availability of Contemplating mode in meta.ai, expanded API access, and publication of Meta’s fuller Safety & Preparedness Report. The other major question is business strategy: whether Muse Spark remains mainly a free consumer assistant or becomes a wider paid platform tied to API sales, shopping, and advertising.

Why This Matters

Muse Spark is not just another chatbot upgrade. It is Meta’s first real test of a more proprietary, app-first AI strategy built around the products it already controls at massive scale. That matters because Meta’s business still depends overwhelmingly on advertising, and Muse Spark’s long-term significance will depend less on whether it wins every benchmark and more on whether it can make Meta’s apps more useful, more engaging, and more commercially valuable.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.

Meta and AMD announce a 60 billion dollar, five-year AI infrastructure partnership covering up to 6 gigawatts of customized GPUs starting in 2H 2026.

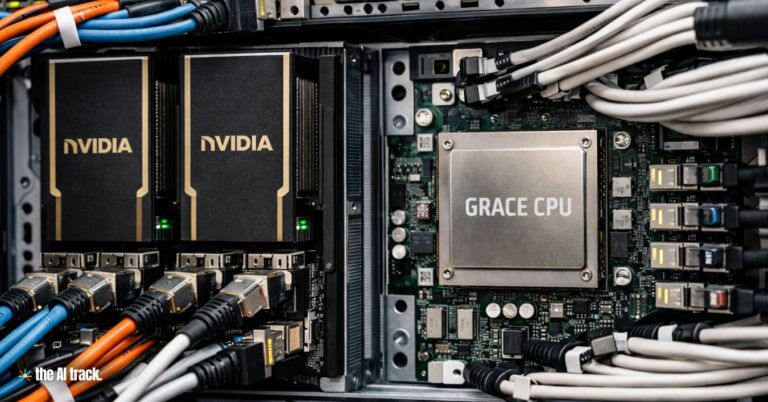

Meta – Nvidia deal scales to millions of Nvidia GPUs and adds standalone Grace CPUs, plus confidential computing designed for WhatsApp AI processing.

Meta acquires Moltbook, the viral AI agent social network built on OpenClaw that sparked debate about autonomous AI communication online.

Read a comprehensive monthly roundup of the latest AI news!