Key Takeaway

Anthropic has introduced “dreaming,” a Claude Managed Agents capability that lets AI agents review past sessions, extract patterns, and update files that store preferences, context, and reusable playbooks. The feature is designed to help agents improve over time without changing the underlying model weights.

Anthropic Introduces Dreaming – Key Points

The Story

Anthropic unveiled dreaming at its Code with Claude developer event in San Francisco, alongside public beta releases for outcomes and multi-agent orchestration. The three features are built into Claude Managed Agents and target common enterprise problems with AI agents: accuracy, learning, verification, and complex task execution. Early users reported gains including a roughly 6x increase in task completion for Harvey, a 50% reduction in document review time for Wisedocs, and large-scale parallel build-log processing at Netflix.

The Facts

Dreaming is available in research preview.

It reviews an agent’s past sessions and memory stores, identifies patterns, and writes learnings that future sessions can use.

The feature is different from standard memory.

Claude memory retains preferences and context across sessions, while dreaming operates at a higher level by finding recurring mistakes, shared workflows, and useful patterns across multiple sessions.

Dreaming does not update model weights.

The process writes plain-text notes, updates context files, and creates structured playbooks rather than changing the underlying model itself.

The process is designed to be inspectable.

The memories, notes, and playbooks created through dreaming can be reviewed by humans, making the improvement process more observable.
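
To make the mechanism concrete, here is a minimal Python sketch of the idea described above: scan several past sessions, keep only the patterns that recur, and write them to a plain-text playbook a human can inspect. It is an illustration, not Anthropic's implementation or API. The `SessionRecord` schema, the function names, and the sample data are all hypothetical, and simple counting stands in for model-driven pattern extraction.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class SessionRecord:
    """One past agent session, reduced to the fields this sketch needs (hypothetical schema)."""
    session_id: str
    mistakes: list[str]


def extract_recurring_mistakes(
    sessions: list[SessionRecord], min_sessions: int = 2
) -> list[tuple[str, int]]:
    """Return mistakes seen in at least `min_sessions` sessions, most frequent first."""
    counts: Counter = Counter()
    for session in sessions:
        # Count each mistake once per session so one noisy session cannot dominate.
        counts.update(set(session.mistakes))
    recurring = [(m, n) for m, n in counts.items() if n >= min_sessions]
    return sorted(recurring, key=lambda item: (-item[1], item[0]))


def render_playbook(recurring: list[tuple[str, int]]) -> str:
    """Render the learnings as plain text that a person can read and edit."""
    lines = ["Playbook (generated from past sessions)"]
    for mistake, n in recurring:
        lines.append(f"- Avoid: {mistake} (seen in {n} sessions)")
    return "\n".join(lines) + "\n"


# Made-up sample data, for illustration only.
sessions = [
    SessionRecord("s1", ["retried without backoff", "skipped input validation"]),
    SessionRecord("s2", ["retried without backoff"]),
    SessionRecord("s3", ["skipped input validation", "retried without backoff", "used stale cache"]),
]
recurring = extract_recurring_mistakes(sessions)
playbook = render_playbook(recurring)
```

The one-off mistake in the sample data is dropped because it appears in only a single session, which mirrors the distinction the article draws between per-session memory and cross-session patterns. Because `playbook` is an ordinary string, it could be saved to a file and reviewed before any future session reads it.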

Anthropic demonstrated dreaming with a fictional lunar drone mission.

A multi-agent system used a commander agent, detector agent, and navigator agent to test drone landings across simulated moon landing sites.

The demo showed improvement after a dreaming session.

After reviewing past simulation runs, the dreaming agent produced a descent playbook that improved performance on previously weaker landing sites.

Outcomes is now in public beta.

The feature lets developers define success criteria through rubrics, then uses a separate grader agent to evaluate outputs and guide revisions.

The grader agent runs in a fresh context window.

Separating the working agent from the grader helps reduce the influence of accumulated session bias or long-thread degradation.
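
The rubric-and-grader loop can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the outcomes API: `Criterion`, `grade`, `run_with_outcomes`, and `toy_worker` are hypothetical names, and plain functions stand in for the model calls. The point it shows is the separation: the grader receives only the output and the rubric, never the worker's history.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Criterion:
    """One rubric line: a name plus a check applied to the output (hypothetical)."""
    name: str
    check: Callable[[str], bool]


def grade(output: str, rubric: list[Criterion]) -> list[str]:
    """Grade in isolation: only the output and the rubric are visible here.

    Returns the names of the criteria the output failed.
    """
    return [c.name for c in rubric if not c.check(output)]


def run_with_outcomes(
    worker: Callable[[str, list[str]], str],
    task: str,
    rubric: list[Criterion],
    max_rounds: int = 3,
) -> tuple[str, list[str]]:
    """Draft, grade, and revise until the rubric passes or the round budget runs out."""
    feedback: list[str] = []
    output = ""
    for _ in range(max_rounds):
        output = worker(task, feedback)
        feedback = grade(output, rubric)
        if not feedback:
            break
    return output, feedback


def toy_worker(task: str, feedback: list[str]) -> str:
    """Stand-in for a model call: adds whatever the grader reported as missing."""
    draft = f"Summary of {task}."
    if "cites a source" in feedback:
        draft += " Source: internal report."
    return draft


rubric = [
    Criterion("is non-empty", lambda s: bool(s.strip())),
    Criterion("cites a source", lambda s: "Source:" in s),
]
final_output, unmet = run_with_outcomes(toy_worker, "the build logs", rubric)
```

In this toy run the first draft fails the "cites a source" criterion, the failure is passed back as feedback, and the second draft passes. A real grader would be another model invocation with a fresh context window, as the article describes, rather than string checks.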

Multi-agent orchestration is also in public beta.

It lets a lead agent split complex work into subtasks and delegate them to specialist agents with their own models, prompts, tools, and context windows.

Multi-agent systems are positioned for investigation-heavy work.

The approach is useful when many context-heavy search or analysis paths can be explored separately, with only the final useful result returned to the main thread.
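
A rough Python sketch of that delegation pattern follows. It assumes nothing about Anthropic's actual orchestration interface: the `Specialist` class and `orchestrate` function are hypothetical, and a formatted string stands in for each model call. What it illustrates is that each specialist keeps its intermediate work in its own context, and the lead receives only the final results.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class Specialist:
    """A sub-agent with its own prompt and private context (hypothetical structure)."""
    name: str
    system_prompt: str
    context: list[str] = field(default_factory=list)

    def run(self, subtask: str) -> str:
        # Intermediate work accumulates in this specialist's own context only.
        self.context.append(f"received: {subtask}")
        self.context.append(f"explored several paths for: {subtask}")
        # Stand-in for a model call; only this short result goes back to the lead.
        return f"[{self.name}] finding for '{subtask}'"


def orchestrate(subtasks: dict[str, str], specialists: dict[str, Specialist]) -> list[str]:
    """Fan subtasks out to named specialists in parallel and collect only their results."""
    with ThreadPoolExecutor(max_workers=max(1, len(subtasks))) as pool:
        futures = {
            name: pool.submit(specialists[name].run, subtask)
            for name, subtask in subtasks.items()
        }
        # Results are gathered in the order the lead assigned the subtasks.
        return [futures[name].result() for name in subtasks]


specialists = {
    "logs": Specialist("logs", "You analyze build logs."),
    "deps": Specialist("deps", "You audit dependencies."),
}
lead_context = orchestrate(
    {"logs": "find the failing step", "deps": "check version drift"},
    specialists,
)
```

After the call, `lead_context` holds two short findings, while the exploration notes stay inside each specialist's `context` list. Keeping that bulk out of the main thread is the benefit the article attributes to the approach.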

Claude Code is getting higher usage limits.

Anthropic is doubling Claude Code rate limits for paid plans, removing peak-hour usage caps for Pro and Max accounts, and sharply raising request limits for Claude Opus models.

Anthropic’s platform usage is rising sharply.

Annualized revenue and usage grew 80x in the first quarter of 2026, compared with an internal plan for 10x annual growth. Claude platform API volume is up nearly 70x year over year, and the average Claude Code developer now spends 20 hours per week using the tool.

Numbers that Matter

- 80x: Anthropic’s reported annualized revenue and usage growth in Q1 2026.

- 20 hours per week: Average time Claude Code developers spend using the tool.

- 220,000+ Nvidia processors: Hardware capacity at SpaceX’s Colossus 1 facility.

- 300 megawatts: New compute capacity Anthropic expects from the SpaceX deal within a month.

- 23,000 engineers: Mercado Libre engineers running Claude Code.

- 500,000+ pull requests: Pull requests reviewed by Mercado Libre with human oversight.

Risks / Limitations

Dreaming still requires trust in an agent’s ability to summarize and preserve useful lessons from its own history. The outputs are inspectable, but the system still depends on the quality of the agent’s pattern extraction, memory curation, and future use of those notes. The outcomes feature also relies on model-based grading, although the grader runs in a fresh context window to improve verification quality.

Background / Context

Claude Managed Agents launched in public beta on April 8 as a platform layer for building agentic applications with memory, tool integration, and action handling. Teams using Managed Agents have shipped 10x faster than teams building their own agent infrastructure from scratch. The new features extend that platform toward longer-running, more autonomous agent workflows.

Anthropic is also expanding compute availability through a deal to use the full computing power of SpaceX’s Colossus 1 facility in Memphis, Tennessee. The company has also expressed interest in working with SpaceX on multiple gigawatts of orbital data center capacity.

What to Watch Next

The main test is whether dreaming can improve reliability in real production environments beyond early adopter examples and staged demos. Anthropic is also using increased compute capacity to raise Claude Code and Claude Opus limits as demand grows around AI coding and enterprise agent workflows.

Why This Matters

AI agents are moving from single-session assistants toward systems that can evaluate work, coordinate multiple specialists, reuse lessons from previous attempts, and run scheduled routines. For developers and enterprises, Anthropic’s new features focus less on raw model intelligence and more on reliability, auditability, compute availability, and repeatable performance in production workflows.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.

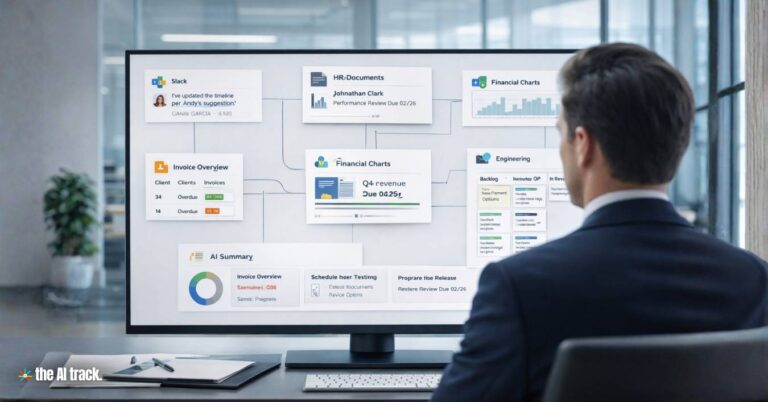
