Key Takeaway

The EU has reached a provisional deal on EU AI Act simplification, delaying some high-risk AI obligations from August 2026 to December 2027 while adding a specific ban on AI-generated non-consensual sexually explicit content and child sexual abuse material.


The Story

EU member states and the European Parliament have reached a provisional deal on EU AI Act simplification as part of the Digital Omnibus package. The agreement delays some obligations for high-risk AI systems, narrows overlap with sector-specific safety rules, introduces simpler compliance measures for businesses, and gives AI developers access to an EU-level testing sandbox. It also bans AI systems used to create non-consensual sexually explicit deepfakes, including so-called nudification apps. The agreement still needs formal approval from the European Parliament and EU governments.

The Facts

The deal is provisional

EU member states and European Parliament negotiators have reached a tentative agreement, but the changes still require formal approval from the Parliament’s plenary session and EU governments.

The changes are part of the Digital Omnibus on AI

The package is part of the European Commission’s effort to simplify digital regulation, reduce bureaucracy, and streamline compliance with the EU AI Act.

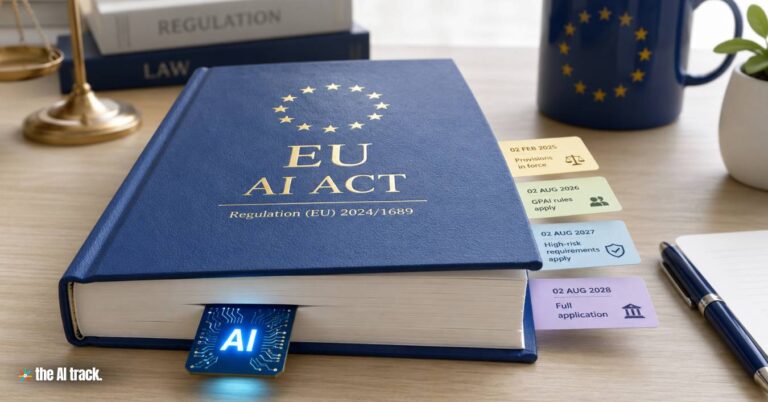

The EU AI Act is already in force

The AI Act entered into force in August 2024, with key provisions scheduled to apply gradually over time.

Some high-risk AI obligations are being delayed

Under the deal, certain obligations for high-risk AI systems would be postponed from August 2, 2026, to December 2, 2027.

High-risk categories include sensitive sectors

The affected high-risk systems include AI involving biometrics, critical infrastructure, education, employment, law enforcement, migration, asylum, and border management.

Machinery is being excluded from duplicate AI Act obligations

Machinery-related AI will be removed from overlapping AI Act obligations where it is already covered by sectoral safety rules.

Product-based AI systems get a longer deadline

AI used in products such as lifts or toys will have until August 2, 2028, to comply.

The agreement aims to reduce administrative burden

Marilena Raouna, Cyprus’s Deputy Minister for European Affairs, described the agreement as a way to reduce recurring administrative costs, support legal certainty, and harmonize implementation across the EU.

Business pressure shaped the debate

Earlier pressure for delays came from U.S. tech companies, European industrial groups, and an open letter signed by 46 European companies, including Airbus, Lufthansa, and Mercedes-Benz, urging a pause to support reasonable implementation and competitiveness.

AI developers will get access to an EU-level sandbox

Companies developing AI systems will be able to test products in a European-level sandbox before bringing them to market.

The package bans AI nudification apps and explicit deepfakes

The Digital Omnibus prohibits AI applications used to create unauthorized sexually explicit deepfakes, including tools that digitally remove people’s clothing.

The ban covers child sexual abuse material and multiple formats

The rules apply to explicit images, videos, or audio created without consent, and are explicitly intended to cover material depicting child sexual abuse.

Mandatory watermarking is included

Companies will have until December 2, 2026, to align their systems with the new rules and introduce mandatory watermarking of AI-generated content.

Timeline / What Changed

The AI Act entered into force in August 2024, but its obligations are being phased in. The latest EU AI Act simplification deal postpones certain high-risk AI rules from August 2, 2026, to December 2, 2027, and keeps a later deadline of August 2, 2028, for AI used in products such as lifts or toys. It also removes machinery from duplicative AI Act obligations and introduces a December 2, 2026, deadline for watermarking and for compliance with the ban on non-consensual explicit AI-generated content.

Background / Context

The AI Act is part of a broader EU digital regulation framework that also includes the Digital Services Act. Together, these rules focus on transparency, fundamental rights, systemic risk management, and accountability for online platforms and AI systems. The Digital Omnibus reflects a wider EU simplification push aimed at reducing regulatory complexity while preserving the bloc’s AI oversight framework.

Risks / Limitations

The legislation has drawn criticism from those who argue that simplifying compliance could weaken the EU’s AI rulebook or reflect pressure from businesses. EU lawmakers involved in the deal argue that the package does not dilute safety rules, but clarifies how companies should comply when AI regulation overlaps with sector-specific legislation.

What to Watch Next

The next step is formal approval by the European Parliament’s plenary session and EU governments. The key dates to watch are December 2, 2026, for companies to comply with the new rules on AI-generated content and watermarking; December 2, 2027, for the postponed high-risk AI obligations; and August 2, 2028, for AI used in products such as lifts or toys.

Why This Matters

The EU AI Act simplification deal shows the EU trying to balance two goals: keeping the AI Act’s risk-based safety framework and reducing regulatory friction for businesses. For users, the most immediate change is the explicit ban on AI systems that generate non-consensual sexually explicit content and child sexual abuse material. Companies, meanwhile, gain more time and clearer pathways to comply with the EU’s AI rules.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.
