Key Takeaway

OpenAI has released ChatGPT Images 2.0, an updated image model designed to produce more accurate, structured, multilingual, and consistent visuals. The key change is a reasoning step before generation, helping the system interpret complex prompts, uploaded files, layouts, real-time information, and multi-image requests more deliberately.

ChatGPT Images 2.0 – Key Points

The Story

OpenAI launched ChatGPT Images 2.0 on April 21 as a major update to ChatGPT’s image generator, following GPT-Image-1.5, which was released in December 2025. The model is designed to improve text inside images, structured layouts, multilingual typography, visual consistency, maps, slides, infographics, iconography, dense compositions, aspect ratios, and complex prompt interpretation. OpenAI CEO Sam Altman described the update as “a huge step forward” during a livestream announcement, comparing the leap to “going from GPT-3 to GPT-5 all at once.”

The Facts

ChatGPT Images 2.0 has been released by OpenAI.

The update is presented as a major improvement to ChatGPT’s image generation system, focused on moving from quick prompt interpretation to more deliberate visual construction.

The model adds a reasoning step before image creation.

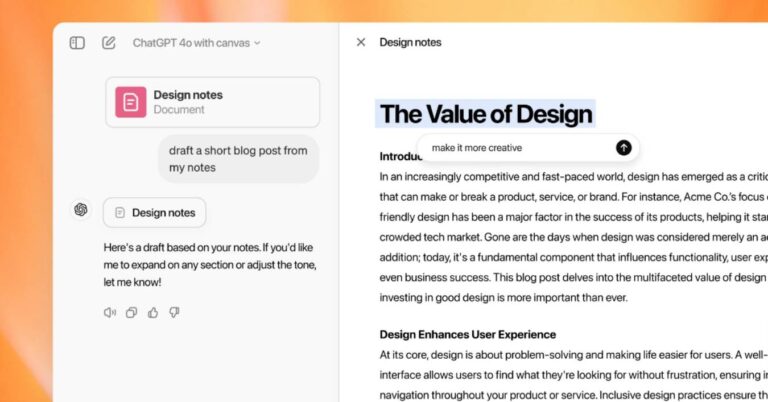

ChatGPT Images 2.0 can work through a prompt before producing the final output, breaking a request into parts, planning the structure, searching for real-time information, double-checking outputs, and deciding how those parts should fit together.

The update includes the

gpt-image-2model for API users.ChatGPT Images 2.0 includes the new

gpt-image-2model for developers and “Thinking” features for ChatGPT subscribers.Text rendering is one of the main improvements.

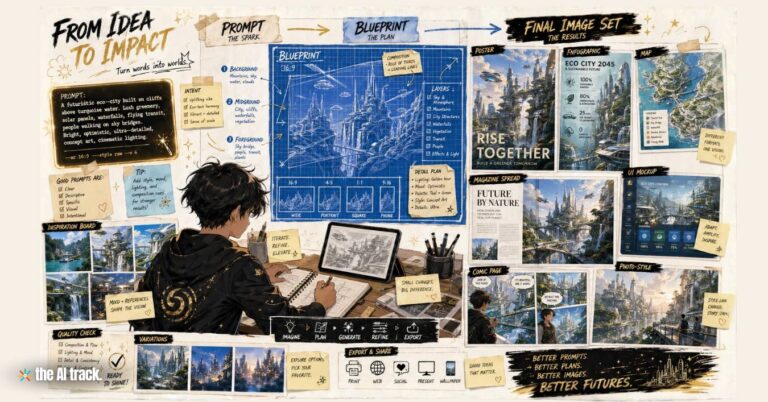

The update targets a long-standing weakness in AI image generation: legible text in posters, menus, slides, scientific diagrams, infographics, small text, iconography, handwritten notes, and dense compositions.

The model supports stronger multilingual text generation.

ChatGPT Images 2.0 is described as improving non-Latin script rendering, with support cited for Japanese, Korean, Chinese, Hindi, Bengali, Arabic, Cyrillic, Greek, Devanagari, and other global scripts.

Structured layouts and aspect ratios are expected to improve.

When users ask for specific visual elements in specific positions, the model is more likely to follow the prompt as an instruction set. OpenAI also lists broader aspect ratio support, from 3:1 to 1:3.

The model can work with uploaded materials.

In a press briefing example, OpenAI Product Lead Adele Li demonstrated the model using a PowerPoint file to synthesize core data, identify logos, and generate a professional poster aligned with the uploaded material’s style.

Visual consistency is stronger across related images.

Multiple images generated from the same idea are described as more likely to keep a recognizable character, shared style, object continuity, fine detail, or coherent visual direction.

The model can generate up to eight distinct images from one prompt.

The feature is intended to support storyboards, manga sequences, children’s books, brand campaigns, social media graphics, character sheets, and multi-scene visual concepts.

The system may take longer to generate images.

The reasoning step can slow the process, especially in Thinking or Pro modes. Depending on the prompt, the process may take a few minutes.

OpenAI is pushing image generation closer to text-model behavior.

The update reflects a broader move toward AI systems that reason across writing and visuals using the same underlying understanding.

What Is New

- Reasoning before generation: ChatGPT Images 2.0 can interpret a visual request before committing to an output, rather than generating from a single reactive pass.

- Better instruction-following for visual structure: The model is described as more capable of handling layouts, specific element placement, uploaded files, real-time information, dense compositions, and multi-layered prompts.

- Improved text inside images: The update targets warped letters, drifting spacing, lost meaning, and poor small-text rendering in generated visual text.

- Expanded multilingual typography: The model is described as better at rendering non-Latin scripts in educational, editorial, commercial, and informational visuals.

- Multi-image continuity: Users can generate up to eight related images from one prompt while maintaining character, object, and style consistency.

How to Access / Pricing

ChatGPT Images 2.0 is available to all ChatGPT, Codex, and API users. Free users are limited to two to three free images per day, while OpenAI says the software works better with Plus or Pro subscriptions.

Plus and Pro users can access more advanced Thinking capabilities, while Pro users receive additional access to ImageGen Pro models.

For developers, the gpt-image-2 API is in beta and supports flexible aspect ratios from 3:1 to 1:3, with resolution support up to 4K.

Use Cases

- Posters, menus, and magazine-style layouts: Better text rendering could make the tool more useful for designs where words must be readable.

- Slides, maps, and infographics: Improved layout control may help users create clearer presentation-style, educational, academic, and data-rich visuals.

- User interface mockups and screenshot transformations: Early examples include analyzing a website screenshot and recreating it in dark mode with clear text and matching imagery.

- Character and style consistency: Users creating a sequence of related visuals may benefit from more stable characters, object continuity, turnaround poses, and visual identity.

- Narrative image sequences: The reasoning step and multi-image generation may help with storyboards, manga, children’s books, comics, and campaign assets.

- Document-to-visual workflows: The model can use uploaded materials as context for posters, explainers, structured visual summaries, product grids, and branded creative assets.

Why This Matters

ChatGPT Images 2.0 points to a more practical phase of AI image generation: fewer broken layouts, more legible text, stronger multilingual support, broader aspect ratios, and better handling of complex creative instructions. For everyday users and professional teams, the important shift is not just prettier images, but less friction when turning detailed ideas, documents, real-time information, and multi-part concepts into usable visuals.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.

Microsoft unveiled MAI AI Models for transcription, voice generation, and image creation as it pushed further toward in-house AI self-sufficiency.

Meta launched Muse Spark AI with multimodal reasoning, image understanding, and Contemplating mode in Meta AI and meta.ai.

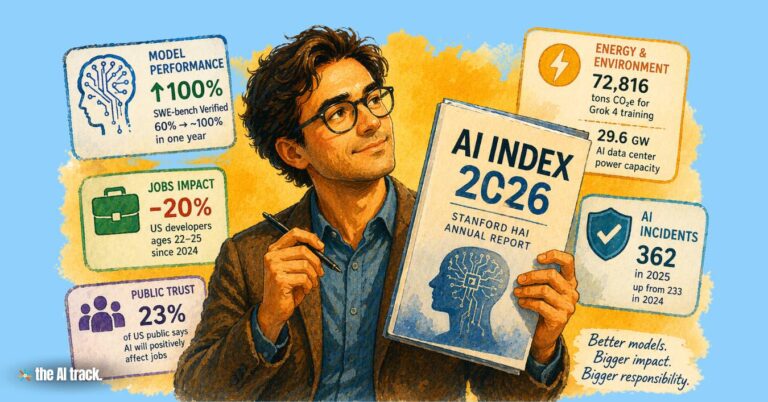

Stanford AI Index 2026 finds rapid AI gains, rising incidents, job disruption, weaker transparency, and a widening expert-public trust gap.

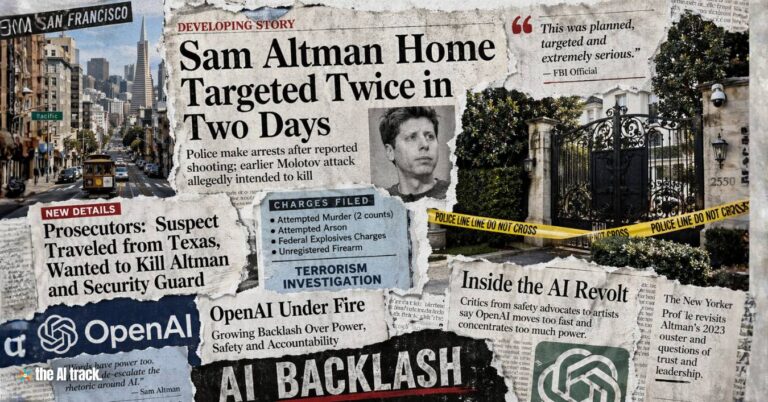

The attack on Sam Altman unfolded across two incidents in one weekend, with prosecutors alleging a targeted attempt to kill the OpenAI CEO.

Read a comprehensive monthly roundup of the latest AI news!