Key Takeaway

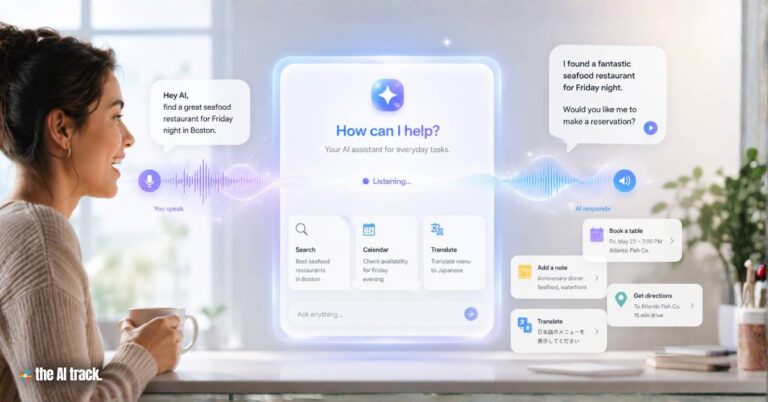

OpenAI has released three new voice API models: GPT-Realtime-2 for live voice agents, GPT-Realtime-Translate for live translation, and GPT-Realtime-Whisper for streaming transcription. The launch gives developers realtime audio tools for voice interfaces that can reason, translate, transcribe, use tools, and respond while a conversation unfolds.

GPT-Realtime-2 – Key Points

The Story

OpenAI released GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper in its API on May 7, 2026. GPT-Realtime-2 is designed for live audio conversations, with audio input, audio output, reasoning, tool use, recovery, and tone control handled inside one realtime model. GPT-Realtime-Translate supports more than 70 input languages and 13 output languages, while GPT-Realtime-Whisper is built for low-latency streaming speech-to-text transcription. The launch targets developers building voice agents, live translation tools, customer support systems, captions, meeting notes, classroom transcription, travel assistants, and other audio-first AI products.

The Facts

GPT-Realtime-2 brings GPT-5-class reasoning to live voice.

The model is built for live voice interactions where an AI agent can keep a conversation moving while reasoning through requests, calling tools, handling corrections or interruptions, and responding in a way that fits the moment.

The model supports a larger context window.

GPT-Realtime-2 has a 128K context window, up from 32K, giving it more room to track longer sessions and multi-step tasks without relying as heavily on external state management.
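To show what the jump from 32K to 128K means in practice, here is a minimal sketch of client-side history trimming. The function name `trim_history`, the per-turn token counts, and the 4,000-token reply reserve are all hypothetical illustrations, not part of OpenAI's API.

```python
from collections import deque

# Context sizes reported for the two model generations (tokens).
GPT_REALTIME_2_CONTEXT = 128_000
PREVIOUS_CONTEXT = 32_000


def trim_history(turn_tokens, context_limit, reserve=4_000):
    """Drop the oldest turns until the history fits within the limit.

    turn_tokens: token counts per conversation turn, oldest first
    (hypothetical values; a real client would count tokens itself).
    reserve: tokens held back for the model's reply (an assumption).
    Returns the token counts of the turns that are kept.
    """
    budget = context_limit - reserve
    kept = deque(turn_tokens)
    total = sum(kept)
    while kept and total > budget:
        total -= kept.popleft()  # evict the oldest turn first
    return list(kept)


# A long session: 40 turns of 1,500 tokens each (60,000 tokens total).
session = [1_500] * 40
print(len(trim_history(session, PREVIOUS_CONTEXT)))        # -> 18
print(len(trim_history(session, GPT_REALTIME_2_CONTEXT)))  # -> 40
```

Under these assumed numbers, a 32K window forces the client to drop 22 of 40 turns, while 128K keeps the whole session in context.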

Reasoning effort can be adjusted.

Developers can choose between minimal, low, medium, high, and xhigh reasoning effort. Low is the default setting, intended to balance lower latency with enough reasoning for common voice interactions.
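The effort levels above can be validated on the client before a session is configured. This is a sketch only: the `session.update` event type follows the Realtime API's existing event pattern, but the `reasoning` / `effort` field layout is an assumption, and the helper `build_session_update` is hypothetical. Check OpenAI's Realtime API reference for the actual parameter name.

```python
import json

# Effort levels listed for GPT-Realtime-2; "low" is the stated default.
EFFORT_LEVELS = ("minimal", "low", "medium", "high", "xhigh")
DEFAULT_EFFORT = "low"


def build_session_update(effort=DEFAULT_EFFORT):
    """Return a JSON session.update message with the chosen effort.

    ASSUMPTION: the nesting session -> reasoning -> effort is
    hypothetical and may not match the real Realtime API schema.
    """
    if effort not in EFFORT_LEVELS:
        raise ValueError(f"unknown reasoning effort: {effort!r}")
    event = {
        "type": "session.update",
        "session": {"reasoning": {"effort": effort}},  # hypothetical field
    }
    return json.dumps(event)


print(build_session_update())        # default: low effort for quick replies
print(build_session_update("high"))  # slower, for harder multi-step requests
```

Keeping the default at `low` and escalating only for harder requests mirrors the latency trade-off described above.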

The model can speak during tool use.

Preambles allow an agent to say phrases such as “let me check that” or “checking your calendar” before a main response, reducing silent gaps in live conversations.
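The preamble idea can be sketched with `asyncio`: say a short phrase first, then wait on the slow tool. In GPT-Realtime-2 the model generates the preamble itself; here `speak` and `check_calendar` are hypothetical stand-ins (a list of spoken lines and a simulated lookup), not OpenAI API calls.

```python
import asyncio

spoken = []  # stands in for the audio the agent actually says


def speak(text):
    """Hypothetical output hook: record what the agent says aloud."""
    spoken.append(text)


async def check_calendar(day):
    """Hypothetical slow backend call (simulated with a short sleep)."""
    await asyncio.sleep(0.1)
    return f"You are free after 3 pm on {day}."


async def answer_with_preamble(day):
    # Fill the silence immediately instead of waiting on the tool.
    speak("Checking your calendar...")
    result = await check_calendar(day)
    speak(result)
    return result


asyncio.run(answer_with_preamble("Friday"))
print(spoken)
```

The caller hears the preamble before the lookup finishes, which is the silent gap the feature is meant to close.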

Parallel tool calls and tool transparency are supported.

GPT-Realtime-2 can trigger multiple backend requests at the same time and make those actions audible, such as saying it is checking a calendar, looking something up, or handling a booking request.
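Parallel tool calls with spoken transparency can be approximated with `asyncio.gather`, which runs independent requests concurrently and returns their results in argument order. A minimal sketch: `run_tool` and the three simulated lookups are hypothetical, with sleeps standing in for network latency.

```python
import asyncio
import time

announcements = []  # what the agent says while tools run


async def run_tool(name, delay, result):
    """Hypothetical backend request; `delay` simulates network latency."""
    announcements.append(f"Now {name}...")  # make the action audible
    await asyncio.sleep(delay)
    return result


async def handle_trip_request():
    # Launch independent lookups concurrently rather than one by one.
    return await asyncio.gather(
        run_tool("checking your calendar", 0.2, "free Friday"),
        run_tool("looking up flights", 0.3, "3 flights found"),
        run_tool("holding a hotel room", 0.1, "room held"),
    )


start = time.perf_counter()
results = asyncio.run(handle_trip_request())
elapsed = time.perf_counter() - start

print(announcements)
print(results)            # results follow argument order, not finish order
print(f"{elapsed:.2f}s")  # close to the slowest call, not the sum
```

Run sequentially, the same three calls would take about 0.6 seconds; concurrently, total wait is bounded by the slowest one.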

Failure recovery is built into the model behaviour.

The model can recover more gracefully by surfacing problems, such as saying it is having trouble with a request, instead of failing silently or breaking the conversation.
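On the application side, the same principle (say something rather than fail silently) can be applied when a tool call times out or errors. A minimal sketch, assuming a hypothetical `book_table` tool that hangs and a hypothetical `speak` hook; `asyncio.wait_for` enforces the timeout.

```python
import asyncio

spoken = []  # stands in for the agent's audio output


def speak(text):
    """Hypothetical output hook: record what the agent says aloud."""
    spoken.append(text)


async def book_table():
    """Hypothetical booking tool that hangs past the timeout."""
    await asyncio.sleep(1.0)
    return "Booked for 7 pm."


async def call_tool_gracefully(tool, timeout=0.2):
    # Surface the failure in speech instead of going silent.
    try:
        result = await asyncio.wait_for(tool(), timeout=timeout)
    except asyncio.TimeoutError:
        speak("I'm having trouble reaching the booking system. Want me to try again?")
        return None
    except Exception:
        speak("Something went wrong with that request. Let's try another way.")
        return None
    speak(result)
    return result


outcome = asyncio.run(call_tool_gracefully(book_table))
print(outcome, spoken)
```

Returning `None` lets the surrounding conversation logic decide whether to retry, while the user has already been told what happened.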

Tone and delivery can be adjusted deliberately.

GPT-Realtime-2 can shift speaking style, such as sounding calmer while resolving an issue, more empathetic when a user is frustrated, or more upbeat when confirming a successful action.

Benchmark results show gains over GPT-Realtime-1.5.

In OpenAI’s reported results, GPT-Realtime-2 reached 96.6% accuracy on Big Bench Audio at high reasoning effort, compared with 81.4% for GPT-Realtime-1.5. At xhigh effort, it reached a 48.5% average pass rate on Audio MultiChallenge, compared with 34.7% for the prior version.
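The gains are easier to compare as both absolute (percentage-point) and relative changes. This sketch only recomputes OpenAI's reported figures; the helper `gain` is illustrative.

```python
# OpenAI-reported scores in percent: (GPT-Realtime-1.5, GPT-Realtime-2).
SCORES = {
    "Big Bench Audio": (81.4, 96.6),
    "Audio MultiChallenge": (34.7, 48.5),
}


def gain(old, new):
    """Return (absolute gain in points, relative gain in percent)."""
    points = new - old
    return points, points / old * 100


for name, (old, new) in SCORES.items():
    points, relative = gain(old, new)
    print(f"{name}: +{points:.1f} points, {relative:.1f}% relative")
```

This prints +15.2 points (about 18.7% relative) for Big Bench Audio and +13.8 points (about 39.8% relative) for Audio MultiChallenge, so the lower-scoring benchmark shows the larger proportional jump.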

Customer benchmarks show larger reported gains.

Zillow’s testing showed a call-success-rate increase from 69% to 95% on its hardest adversarial benchmark after prompt optimization, with stronger robustness on Fair Housing compliance.

The translation model targets multilingual voice applications.

GPT-Realtime-Translate supports more than 70 input languages and 13 output languages. It is designed for live multilingual voice experiences where users can speak in their preferred language and hear translated speech in real time, including customer support, cross-border sales, education, events, and creator platforms.

The streaming Whisper model targets live transcription workflows.

GPT-Realtime-Whisper transcribes speech as people talk, supporting use cases such as live captions, meeting notes, classroom transcription, broadcasts, voice agents, customer support, healthcare, sales calls, recruiting workflows, and faster follow-up systems.
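One way to build live captions from a streaming transcriber is to show partial text as it arrives and swap in the final transcript when an utterance completes. A minimal sketch, assuming event names and fields (`item_id`, `delta`, `transcript`) modeled on the Realtime API's input-audio transcription events; whether GPT-Realtime-Whisper emits exactly these shapes is an assumption to verify against OpenAI's documentation, and `CaptionBuffer` is a hypothetical helper.

```python
# ASSUMPTION: event shapes are modeled on the Realtime API's
# input_audio_transcription delta/completed events; verify against the docs.
DELTA = "conversation.item.input_audio_transcription.delta"
DONE = "conversation.item.input_audio_transcription.completed"


class CaptionBuffer:
    """Assemble streaming transcript fragments into live captions."""

    def __init__(self):
        self.partial = {}  # item_id -> text received so far
        self.final = []    # finished utterances, in completion order

    def handle(self, event):
        item = event["item_id"]
        if event["type"] == DELTA:
            # Append the fragment so captions update as the speaker talks.
            self.partial[item] = self.partial.get(item, "") + event["delta"]
        elif event["type"] == DONE:
            # Replace the partial text with the final transcript.
            self.partial.pop(item, None)
            self.final.append(event["transcript"])

    def live_caption(self):
        return " ".join(self.final + list(self.partial.values()))


buf = CaptionBuffer()
for ev in [
    {"type": DELTA, "item_id": "a", "delta": "Welcome "},
    {"type": DELTA, "item_id": "a", "delta": "to class"},
    {"type": DONE, "item_id": "a", "transcript": "Welcome to class."},
    {"type": DELTA, "item_id": "b", "delta": "Today we"},
]:
    buf.handle(ev)
    print(buf.live_caption())
```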

Pricing is usage-based.

GPT-Realtime-2 costs $32 per million audio-input tokens, $0.40 per million cached input tokens, and $64 per million audio-output tokens. GPT-Realtime-Translate costs $0.034 per minute, and GPT-Realtime-Whisper costs $0.017 per minute.
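Because GPT-Realtime-2 is billed per token and the other two models per minute, a rough estimate needs both formulas. This sketch uses only the prices quoted above; the workload numbers are hypothetical, and it ignores any line items not listed here.

```python
# Published per-unit prices from the article (USD).
RT2_AUDIO_IN_PER_M = 32.00   # per million audio-input tokens
RT2_CACHED_IN_PER_M = 0.40   # per million cached input tokens
RT2_AUDIO_OUT_PER_M = 64.00  # per million audio-output tokens
TRANSLATE_PER_MIN = 0.034
WHISPER_PER_MIN = 0.017


def realtime2_cost(audio_in, cached_in, audio_out):
    """Estimate GPT-Realtime-2 cost in USD from token counts."""
    return (
        audio_in / 1e6 * RT2_AUDIO_IN_PER_M
        + cached_in / 1e6 * RT2_CACHED_IN_PER_M
        + audio_out / 1e6 * RT2_AUDIO_OUT_PER_M
    )


def per_minute_cost(minutes, rate):
    """Estimate cost for the per-minute Translate and Whisper models."""
    return minutes * rate


# Hypothetical workload: these token counts are illustrative, not measured.
print(f"${realtime2_cost(200_000, 500_000, 100_000):.2f}")  # -> $13.00
print(f"${per_minute_cost(60, TRANSLATE_PER_MIN):.2f}")     # one hour: $2.04
print(f"${per_minute_cost(60, WHISPER_PER_MIN):.2f}")       # one hour: $1.02
```

Note how little the 500,000 cached tokens add ($0.20) compared with the uncached audio input and output, which is why prompt caching matters for long sessions.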

How to Access / Pricing

The models are available through OpenAI’s Realtime API. Developers can test them in the Playground and start building through SDK calls or Codex-assisted setup.

Market Timing

The release comes as enterprise voice AI stacks are moving from stitched-together systems toward integrated models. OpenAI frames voice AI around three patterns: voice-to-action, where users speak requests and systems complete tasks; systems-to-voice, where software turns context into spoken guidance; and voice-to-voice, where AI helps conversations continue across languages or changing context. Early use cases include Zillow-style home search and tour scheduling, Priceline-style trip management, Vimeo-style live product education translation, and Deutsche Telekom-style multilingual customer support.

Why This Matters

Voice AI is moving from demos toward production workloads. By combining live audio, reasoning, tool use, translation, and transcription into API models with usage-based pricing, OpenAI is making it easier for developers to build voice agents without stitching together multiple vendors. The result could reshape how companies build customer support, booking, translation, education, media, captions, meeting tools, travel assistants, and enterprise assistant systems.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.
