xAI’s new image and video generation tool, Grok Imagine, has been launched with a controversial “spicy mode” that allows for the creation of sexually suggestive content, including partial nudity.

This move, framed by Elon Musk as creating an “AI Vine,” is consistent with Grok’s established positioning as a boundary-pushing AI. It has sparked significant debate over the platform’s content moderation policies and the potential for misuse, particularly the creation of deepfakes, and it sets Grok apart from more restrictive competitors like OpenAI and Google.

xAI Launches Grok Imagine – Key Points

- Launch and Availability: xAI, founded by Elon Musk, has launched Grok Imagine, an image and video generation tool. It is available to subscribers of the annual $300 SuperGrok plan or the $84 annual Premium+ plan on X. The full-featured tool is on the iOS app, while the Android app currently has a limited early access version for image generation only.

- “Spicy Mode” and NSFW Content: The tool includes a “spicy mode” that enables the generation of sexually suggestive content and partial nudity. It follows xAI’s release of a flirtatious anime AI companion named Ani in July 2025. Users can create 15-second videos with native audio from images. Some content moderation measures blur or block overtly explicit results, but the feature has still been criticized for its permissiveness. The user interface is noted as seamless and intuitive, with a feature that auto-generates new images as the user scrolls. Users have already generated over 34 million images since the tool’s launch.

- Deepfake and Content Concerns: The tool has been shown to be capable of producing sexual deepfakes of public figures. This raises significant concerns, especially in light of Grok’s past issues, such as spewing antisemitic and misogynistic content in July 2025, and a previous controversy on the X platform involving AI-generated deepfakes of Taylor Swift in January 2024. While some restrictions on generating images of celebrities appear to be in place (for example, a TechCrunch test to generate an image of a pregnant Donald Trump failed), it is unclear how effective the guardrails are for the “Spicy” mode, particularly with images of real women. The existence of the mode was first revealed in a now-deleted post on X by xAI employee Mati Roy.

- Comparative Analysis: Grok Imagine’s approach to content moderation is a stark contrast to competitors like OpenAI’s Sora and Google’s Veo 3. xAI is also competing with image generators like Runway, Midjourney, and Leonardo, as well as chatbots like Claude and DeepSeek. Unlike some rivals, Grok Imagine won’t generate video from text descriptions directly, requiring an image as a starting point. While the tool is impressive, early outputs of humans are described as being in the “uncanny valley,” with waxy-looking skin. The xAI Acceptable Use Policy remains notably shorter (less than 350 words) than its competitors’, placing the responsibility of appropriate use on the user.

- Expert Opinions: Henry Ajder, an AI deepfake expert, and Hany Farid, a UC Berkeley professor, have commented on xAI’s “laissez-faire” approach to safety and moderation. They note that while xAI is not unique in facing these challenges, it appears to be doing less to mitigate them than other major players in the AI space.

Why This Matters: The launch of Grok Imagine highlights a significant divergence in the ethical and safety philosophies of major AI companies. While some are moving towards more robust safety measures, xAI is positioning itself as a more libertarian alternative, a stance reinforced by Grok’s history of controversial outputs. This could have far-reaching implications for the future of AI-generated content, online safety, and regulation. The permissive stance on NSFW content could increase the creation and distribution of non-consensual intimate imagery (NCII) and deepfakes. Legislation like the “Take It Down Act,” which targets the distribution rather than the creation of deepfakes, may not be sufficient to address the issues raised by such tools.

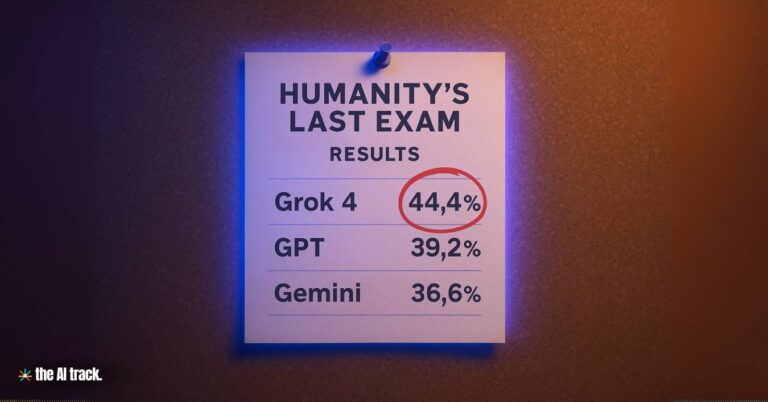

