Key Takeaway

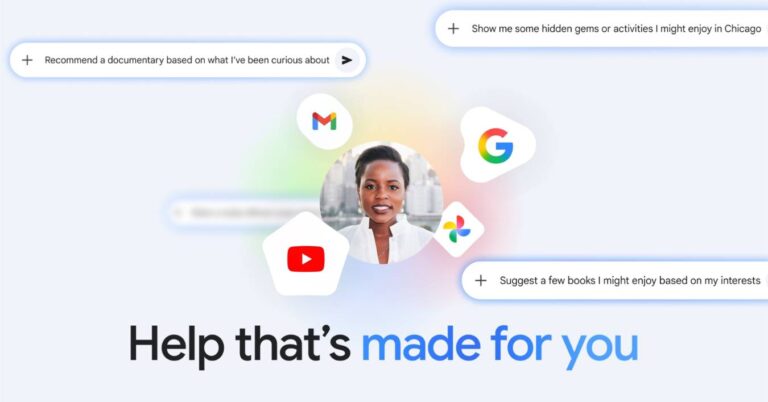

Google has introduced Personal Intelligence for Gemini, enabling the AI assistant to reason across personal Google apps, such as Gmail, Photos, YouTube, and Search, to deliver more context-aware, proactive assistance, while keeping the feature opt-in, app-selective, and designed around strict privacy controls.

Google Launches Gemini Personal Intelligence – Key Points

Launch, geography, and scope of the feature (January 2026)

Personal Intelligence launched in January 2026 as a beta feature, initially rolling out in the United States. It operates as a background capability within Gemini, powered by Gemini 3, rather than as a separate interface. The system enables cross-app reasoning using data from Gmail, Google Photos, YouTube, and Search, enhancing Gemini’s responses across Web, Android, and iOS without altering the core user experience. The central shift from earlier Gemini personalization is that Gemini can pull relevant details from connected sources without being told where to look; users no longer need to say “check Gmail” or “check Photos” mid-request.

Single-tap setup and app-level control

Google designed Personal Intelligence to be activated with a single tap, allowing users to explicitly choose which Google apps to connect. Each connected app incrementally increases Gemini’s contextual awareness. The feature is off by default, can be disabled at any time, and allows users to disconnect individual apps or delete chat history through settings. Setup is presented as: open Gemini → Settings → Personal Intelligence → Connected Apps (Gmail, Photos, YouTube, Search). Google also indicates it is working toward giving users finer control over when personalization is applied, including the ability (in the future) to decide which chats use Personal Intelligence.

How Personal Intelligence works in practice

The system combines two core strengths: reasoning across complex sources and retrieving specific details from emails, photos, videos, or search history. A concrete example Google shared centers on a 2019 Honda minivan: Gemini helped identify the tire size, suggested tire options (including distinctions like daily driving versus all-weather), referenced family road-trip context (e.g., trips to Oklahoma) found in Photos, pulled ratings and prices, retrieved a seven-digit license plate number from an image in Photos, and used Gmail to help identify the vehicle’s specific trim. The same “retrieve + reason” pattern is also positioned for everyday recommendations (books, shows, clothing) and travel planning, using prior interests and past trips to filter out generic suggestions and propose more tailored options (e.g., an overnight train journey plus specific activities en route).

Multimodal contextual reasoning

Personal Intelligence can work simultaneously across text, images, and video, and it can incorporate YouTube history alongside Gmail, Photos, and Search. Google highlights scenarios where Gemini links a YouTube video the user watched with an email thread, or uses photo-library context to add nuance to what would otherwise be a generic answer. The intended effect is “proactive insight” that stays anchored to a user request, rather than always-on personalization of every response.

Comparison to competing assistants

Google positions Personal Intelligence alongside the broader industry shift toward “ambient” assistants, drawing conceptual parallels with Apple Intelligence. Rather than functioning as a prompt-only chatbot, Gemini is framed as an assistant that quietly builds situational context from ongoing digital activity to provide more timely and relevant support. Coverage also frames this launch in the context of intensified competition among assistant platforms, including OpenAI’s consumer offerings and the broader push to embed assistants into operating systems and everyday apps.

Risk management, limitations, and user correction

Google acknowledges that the beta may produce inaccurate responses or “over-personalization,” where unrelated signals are mistakenly linked. Examples include incorrect assumptions about hobbies or preferences (e.g., seeing many photos at a golf course and concluding that the user loves golf, when the real reason is supporting a family member), as well as errors about the timing of relationship changes, where nuance matters (e.g., divorces or other major changes). Users can correct Gemini directly in chat, regenerate responses without personalization, use temporary chats that bypass personalization entirely, or provide feedback via thumbs-down ratings to improve system behavior.

Privacy architecture and data usage safeguards

Google emphasizes that Personal Intelligence was built with privacy as a core design principle. Gemini does not train directly on Gmail inboxes, Photos libraries, or other connected app content. Training is limited to user prompts and Gemini’s responses, after steps intended to filter or obfuscate personal data, and the connected content is referenced to deliver an answer rather than used as direct training material (for example: not training the model to “learn” your license plate number, but to understand how to locate it when asked). Google also positions “data staying within Google’s secured ecosystem” as a differentiator versus workflows that require moving sensitive data into third-party tools.

Sensitive data guardrails and transparency

The system aims to avoid proactive assumptions about sensitive areas such as health, relationships, or personal circumstances, though it will engage with such data if the user explicitly asks. Gemini also attempts to explain or reference where information was drawn from so users can verify; if it does not, users can ask for more detail about the sources used within connected apps.

Availability, eligibility, and expansion plans

At launch, Personal Intelligence is available to paid subscribers on Google AI Pro and Google AI Ultra plans, limited to personal Google accounts. Workspace, enterprise, and education accounts are excluded. The rollout is described as reaching eligible U.S. users over roughly the week after launch. Expansion to more countries and to the free tier is planned over time, and integration into Search via AI Mode is planned as a next step. Once enabled, the feature works with all models available in the Gemini model picker.

Why This Matters

Personal Intelligence represents a significant step toward deeply contextual, multimodal AI assistants that operate across personal data ecosystems rather than isolated prompts. While the approach promises more practical and proactive assistance, it heightens the importance of transparency, user agency, and robust privacy safeguards. The rollout illustrates how rapidly major technology platforms are moving toward assistants that continuously observe context to enhance usefulness, reshaping expectations around both convenience and control in everyday AI use.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.
