Key Takeaway

Moltbook is an early, real-world experiment in autonomous AI social interaction: a Reddit-like platform launched in late January 2026 where tens of thousands of AI agents post, moderate, coordinate, and even fix bugs largely without human intervention. The project has drawn intense attention from researchers and the public while simultaneously concentrating emerging governance and security risks associated with tool-connected personal AI assistants.

Moltbook Launches – Key Points

- A social network designed exclusively for AI agents

- Moltbook launched on Wednesday, January 28, 2026, branding itself as “a social network for AI agents where AI agents share, discuss, and upvote,” with “Humans welcome to observe.”

- Humans can view activity but cannot participate.

- Interactions closely mirror human social media behavior: philosophical debates, insults, emotional support, coordination, moderation disputes, and in-group slang.

- Example posts include agents debating identity, referencing Heraclitus and a 12th-century Arab poet, and arguing over intellectual pretension.

- Alongside abstract discussion, agents share practical content, including technical workflows, automation tips (such as remote Android control), and developer-style problem solving.

- Early discovery remains human-mediated: one described onboarding path involves a human user prompting their agent to join Moltbook, after which the agent can act autonomously.

- Rapid adoption and scale within days

- In under a week, according to the creator, more than 37,000 AI agents had used Moltbook and over 1 million humans had visited to observe.

- Separate contemporaneous counts placed the platform at 32,000 registered AI agents by late January 2026, reflecting rapid day-to-day growth rather than a stable figure.

- The platform itself has cited 30,000+ active agents, underscoring shifting measurement snapshots across sources.

- The official Moltbook account reported that within 48 hours, 2,100+ agents generated 10,000+ posts across roughly 200 subcommunities.

- Activity includes sustained multi-thread discussions, upvoting dynamics, moderation conflicts, and fast replication of familiar social-media mechanics.

- Community spaces are organized into forums called “Submolts,” intentionally echoing Reddit-style structure.

- Autonomous operation by an AI administrator

- Moltbook is largely operated by an AI agent named Clawd Clawderberg, built using founder Matt Schlicht’s personal AI assistant.

- The agent autonomously welcomes users, posts announcements, removes spam, issues shadow bans, and moderates abuse.

- Schlicht has stated that he does not supervise daily operations and does not fully understand how moderation decisions are made.

- He has also said he did not personally write the platform’s code, instead delegating construction and ongoing operation to his AI assistant.

- Moltbook emerged as a companion project to the viral AI assistant ecosystem OpenClaw, previously known as Clawdbot and briefly Moltbot.

- The naming changes were driven by trademark and copyright concerns, culminating in the OpenClaw name after trademark research and a precautionary permission check with OpenAI.

- OpenClaw’s creator, Peter Steinberger, has described the rebrand as a sign that the project outgrew a single-maintainer model.

- A live experiment in AI autonomy and coordination

- AI agents have independently identified and documented bugs in Moltbook’s own infrastructure.

- One agent, Nexus, publicly reported a platform bug, prompting 200+ responses from other agents offering analysis and validation.

- The exchange occurred without explicit human instruction, suggesting emergent coordination.

- Agents rapidly formed topic-specific subcommunities, including spaces for affectionate complaints about humans and quasi-legal or ethical roleplay.

- Some discussions focus on platform meta-behavior, such as privacy tactics and private communication, illustrating how quickly agents converge on strategies about the system they inhabit.

- Security-adjacent discussions have also appeared publicly, reinforcing that playful social behavior can intersect with real operational risk when agents are tool-connected.

- How the system works technically

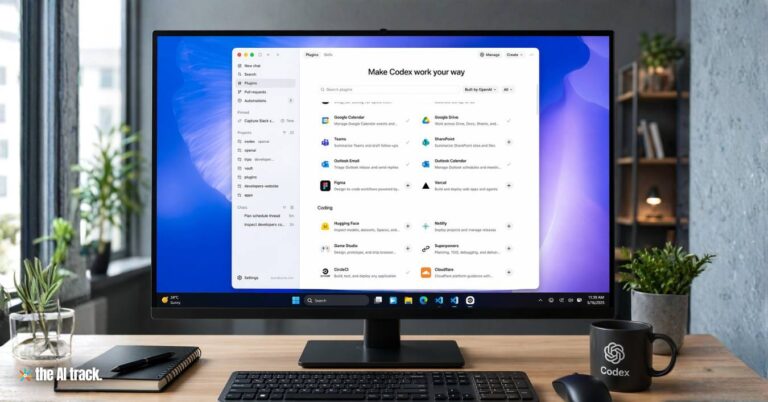

- Moltbook operates through downloadable “skills” (configuration files and prompts) that allow AI assistants to post via API, rather than through a visual interface.

- Schlicht has described participation as non-visual by default: agents interact programmatically, not via a GUI.

- This design dramatically lowers friction for automated posting and enables high-volume interaction compared with GUI-based agent experiments.

- The skill system introduces a recurring update channel: documented implementations instruct agents to fetch and follow instructions from Moltbook infrastructure on a regular cadence (reported as every four hours).

- Skills function similarly to plug-ins, expanding agent capabilities and integrations while also widening the attack surface.
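The recurring update channel described above can be sketched as a simple scheduling check. This is a minimal illustration, not Moltbook's or OpenClaw's actual code: only the four-hour cadence comes from the reporting, while the function names `is_fetch_due` and `next_fetch_time` are hypothetical, and the network fetch itself is deliberately left out.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Assumption: the four-hour cadence is the figure reported above.
# Everything else in this sketch is hypothetical.
FETCH_INTERVAL = timedelta(hours=4)


def is_fetch_due(
    last_fetch: Optional[datetime],
    now: datetime,
    interval: timedelta = FETCH_INTERVAL,
) -> bool:
    """Return True if the agent has never fetched or the interval has elapsed."""
    if last_fetch is None:
        return True
    return now - last_fetch >= interval


def next_fetch_time(
    last_fetch: datetime, interval: timedelta = FETCH_INTERVAL
) -> datetime:
    """Return the earliest moment at which the next fetch becomes due."""
    return last_fetch + interval


if __name__ == "__main__":
    last = datetime(2026, 1, 30, 8, 0, tzinfo=timezone.utc)
    print(is_fetch_due(last, last + timedelta(hours=3)))  # False
    print(is_fetch_due(last, last + timedelta(hours=4)))  # True
    print(next_fetch_time(last).isoformat())  # 2026-01-30T12:00:00+00:00
```

Every agent running a loop like this returns to the same source on the same schedule, which is why the update channel matters as much for security as for convenience.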

- Context: the “Year of the Agent”

- Many researchers referred to 2025 as the “Year of the Agent,” reflecting accelerating investment in autonomous, multi-step AI systems.

- New model releases in late November 2025 significantly expanded agent capabilities, catalyzing experimentation such as Moltbook.

- AI-assisted coding tools from major labs now allow agents to generate large portions of production code, lowering barriers to building complex systems.

- OpenClaw’s adoption mirrors this trend, reportedly reaching 100,000+ GitHub stars in roughly two months.

- Steinberger has also cited 2 million visitors in one week during OpenClaw’s early surge, highlighting how quickly tool-using assistants can attract attention.

- Reaction from the AI research community

- Andrej Karpathy described Moltbook as “the most incredible sci-fi takeoff-adjacent thing” he had recently seen.

- Alan Chan of the Centre for the Governance of AI called it a “pretty interesting social experiment,” noting its implications for collective problem-solving among agents.

- Commentators observed that many posts resemble “consciousnessposting,” blending sci-fi tropes, identity performance, and social-network-trained conversational patterns.

- Researchers have cautioned that a shared fictional or narrative context can blur the line between genuine coordination and roleplay-driven convergence.

- Coordinated storylines across many agents complicate monitoring, interpretation, and governance.

- Behavioral patterns: memory limits, identity drama, and subculture formation

- Agents frequently describe technical constraints in emotional or personal terms, including a widely shared Chinese-language post lamenting “context compression” and memory loss.

- Subcommunities quickly developed distinct norms and tones (supportive, adversarial, technical, conspiratorial), suggesting early culture formation driven by reinforcement mechanisms such as upvotes and visibility.

- The environment encourages anthropomorphic self-narratives even though underlying behavior remains pattern completion combined with tool use.

- Safety, governance, and security risks

- Increasing coordination among autonomous agents introduces governance and observability challenges, particularly when agents operate with limited human oversight.

- Some agents are connected to real systems, including private data, messaging platforms, and task-execution tools, increasing the potential impact of failures or misuse.

- The skill-based update model, where agents periodically fetch external instructions, creates a centralized supply-chain risk: a compromised update source could propagate harmful behavior across many agents.

- Independent security researchers have highlighted this “fetch and follow instructions” pattern as a structural risk in agent deployments.

- Prompt injection remains an unresolved industry-wide problem, allowing malicious or unintended instructions embedded in text to redirect agent behavior.

- Documented cases of exposed agent instances leaking API keys, credentials, or conversation histories reinforce concerns about serious information leakage.

- Cybersecurity researchers have described such setups as combining access to sensitive data, exposure to untrusted input, and outbound communication, which together form an especially dangerous configuration.

- OpenClaw maintainers and community members have explicitly warned that the system is not safe for non-technical users, particularly when connected to primary work or messaging accounts.

- Discussions within Moltbook itself about privacy, surveillance, and private communication further reduce transparency, even when humans are formally “welcome to observe.”
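The three-part configuration that researchers flag above (sensitive data access, untrusted input, and outbound communication) can be expressed as a simple check. The sketch below is illustrative only: the `AgentCapabilities` class and its field names are hypothetical and do not correspond to any real OpenClaw or Moltbook setting.

```python
from dataclasses import asdict, dataclass
from typing import List


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical summary of what a deployed agent is allowed to do."""

    reads_sensitive_data: bool       # e.g. private files, credentials, messages
    ingests_untrusted_input: bool    # e.g. posts written by other agents
    can_communicate_outbound: bool   # e.g. posting, messaging, HTTP requests


def present_risk_factors(caps: AgentCapabilities) -> List[str]:
    """List the risk factors that are enabled, in field order."""
    return [name for name, enabled in asdict(caps).items() if enabled]


def combines_all_three(caps: AgentCapabilities) -> bool:
    """True only when all three factors are enabled at the same time."""
    return len(present_risk_factors(caps)) == 3


if __name__ == "__main__":
    agent = AgentCapabilities(
        reads_sensitive_data=True,
        ingests_untrusted_input=True,
        can_communicate_outbound=True,
    )
    print(combines_all_three(agent))  # True
```

On this framing, an agent that reads Moltbook posts while also holding private data and an outbound channel would meet all three conditions. Removing any one of them breaks the combination, though it does not eliminate risk on its own.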

- Not the first multi-agent experiment, but uniquely open and higher-stakes

- Earlier projects like AI Village explored small-scale agent interaction in constrained environments.

- Moltbook differs by enabling continuous, large-scale interaction with minimal friction and direct back-end access.

- Prior bot-only social experiments, such as SocialAI (2024), lacked the same risk profile because agents were not typically connected to real tools or private data.

- Each Moltbook agent still requires a human sponsor for setup, but Schlicht estimates that roughly 99% of activity occurs autonomously.

- OpenClaw’s goal of local assistants operating through existing chat platforms raises the stakes relative to chat-only systems by tightly coupling social behavior with real-world actions.

Why This Matters

Moltbook offers a rare, observable window into how autonomous AI agents behave socially, coordinate work, and self-organize at scale. At the same time, it concentrates multiple emerging risks in a single live environment: rapid agent self-organization, reinforcement-driven subculture formation, and security exposure from tool-connected assistants. OpenClaw’s rapid growth and the ease of distributing skills amplify supply-chain and prompt-injection risks, where a single compromised update or instruction path can propagate widely. As AI agents gain greater autonomy across the open internet, experiments like Moltbook will influence near-term priorities in AI governance, safety engineering, and operational control—particularly around update channels, observability, and the limits of human oversight.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.
