Key Takeaway

Microsoft has introduced three in-house MAI AI Models for speech transcription, voice generation, and image generation, expanding its multimodal lineup beyond text. The launch also marks a clearer move toward AI self-sufficiency: Microsoft is building competing model infrastructure while maintaining its OpenAI partnership.

Microsoft Launches MAI AI Models – Key Points

The Story

Microsoft AI announced three foundation models: MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2. Together, these MAI AI Models cover speech-to-text, synthetic voice generation, and image generation, and they are available through Microsoft Foundry and MAI Playground. Microsoft is positioning them around practical enterprise use, lower costs, and tighter integration with its own infrastructure. The launch reflects Microsoft's broader push to reduce its dependence on OpenAI after renegotiating partnership terms that had previously limited its ability to pursue advanced AI development independently. It is also the first public model release from Mustafa Suleyman's MAI Superintelligence team.

The Facts

Microsoft released three multimodal foundation models.

The MAI AI Models lineup includes MAI-Transcribe-1 for speech transcription, MAI-Voice-1 for voice generation, and MAI-Image-2 for image generation.

The launch deepens Microsoft’s move toward in-house AI independence.

Microsoft remains closely partnered with OpenAI, but the new MAI family is part of a broader effort to build proprietary multimodal systems and compete more directly with OpenAI, Google, and other model providers.

MAI-Transcribe-1 supports 25 languages and is positioned as the lead technical release.

Microsoft says the model converts speech to text across 25 languages, runs 2.5 times faster than Microsoft's own Azure Fast transcription service, and achieved a 3.8% average word error rate on the FLEURS benchmark.
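For readers unfamiliar with the metric, word error rate (WER) is conventionally the number of word substitutions, deletions, and insertions needed to turn a transcript into the reference, divided by the number of reference words. The sketch below is a generic word-level edit-distance implementation, not Microsoft's evaluation code. Published benchmark scores such as FLEURS also depend on text normalization (casing, punctuation) and on averaging across languages, which this example skips, so it will not reproduce Microsoft's figure.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] is the edit distance between the reference words seen so far
    # and the first j hypothesis words (Levenshtein distance over words).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,                      # deletion of ref_word
                curr[j - 1] + 1,                  # insertion of hyp_word
                prev[j - 1] + substitution_cost,  # match or substitution
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


# One substitution ("sat" -> "sit") and one deletion ("the") over 6 reference words.
print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

A WER of 3.8% therefore means roughly four word-level errors per hundred reference words, averaged over the benchmark's languages.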

Microsoft says MAI-Transcribe-1 beats several rival systems on multilingual benchmark results.

According to Microsoft, it outperformed Whisper-large-v3 in all 25 supported languages, Gemini 3.1 Flash in 22 of 25, and both ElevenLabs Scribe v2 and GPT-Transcribe in 15 of 25.

Microsoft has also shared architecture and deployment details for MAI-Transcribe-1.

Microsoft says the model pairs a transformer-based text decoder with a bi-directional audio encoder and accepts MP3, WAV, and FLAC files up to 200MB. Diarization, contextual biasing, and streaming are listed as coming soon.
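A client could check the listed format and size limits before uploading. The sketch below is a hypothetical pre-check, not part of any Microsoft SDK: the function name is illustrative, it looks only at the file extension rather than decoding the audio, and it assumes "200MB" means 200 × 1024 × 1024 bytes, which the announcement does not specify.

```python
from pathlib import Path

# Formats and size limit as described in Microsoft's announcement.
# Treating "200MB" as binary megabytes is an assumption of this sketch.
SUPPORTED_EXTENSIONS = {".mp3", ".wav", ".flac"}
MAX_UPLOAD_BYTES = 200 * 1024 * 1024


def upload_problems(filename: str, size_bytes: int) -> list[str]:
    """Hypothetical pre-upload check; returns human-readable problems (empty if none)."""
    problems = []
    suffix = Path(filename).suffix.lower()
    if suffix not in SUPPORTED_EXTENSIONS:
        problems.append(f"unsupported format: {filename}")
    if size_bytes > MAX_UPLOAD_BYTES:
        problems.append(f"file is {size_bytes} bytes; limit is {MAX_UPLOAD_BYTES}")
    return problems


print(upload_problems("meeting.WAV", 50_000_000))         # acceptable file
print(upload_problems("notes.m4a", 300 * 1024 * 1024))    # wrong format and too large
```

Returning a list rather than raising lets a caller report every problem at once; the service itself remains the authority on what it accepts.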

MAI-Voice-1 is built for rapid text-to-speech generation.

Microsoft says the model can generate 60 seconds of natural-sounding audio in one second on a single GPU, preserves speaker identity across longer content, and supports custom voice creation from only a few seconds of sample audio.
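Taken at face value, the throughput claim is a real-time factor of about 1/60: a 30-minute narration would need roughly 30 seconds of single-GPU compute. The helper below only encodes that arithmetic. Its name is illustrative, and it assumes the claimed rate holds constant regardless of text length, voice, or batching, which Microsoft has not stated.

```python
# Microsoft's stated rate: 60 seconds of audio generated per 1 second on a single GPU.
CLAIMED_AUDIO_SECONDS_PER_COMPUTE_SECOND = 60.0


def estimated_generation_seconds(audio_seconds: float) -> float:
    """Rough single-GPU estimate, assuming the claimed rate scales linearly with length."""
    if audio_seconds < 0:
        raise ValueError("audio_seconds must be non-negative")
    return audio_seconds / CLAIMED_AUDIO_SECONDS_PER_COMPUTE_SECOND


print(estimated_generation_seconds(30 * 60))  # a 30-minute narration
```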

The speech models give Microsoft a fuller in-house voice stack.

Combined with a language model, they can support captioning, meeting transcription, voice agents, and synthetic voice output on Microsoft infrastructure. Microsoft says MAI-Transcribe-1 is already being tested in Copilot Voice and Microsoft Teams for conversation transcription.

MAI-Image-2 is Microsoft's second-generation in-house image model and is getting a broader rollout.

Microsoft says the model improves speed and image realism over the previous version, generates images at least 2x faster on Foundry and Copilot, and is rolling out across Bing and PowerPoint.

MAI-Image-2 had already shown early competitive traction before this broader push.

The model had reached number three on the Arena.ai text-to-image leaderboard in March, behind Gemini 3.1 Flash and GPT Image 1.5. Microsoft also says WPP is among the first enterprise partners using it at scale.

Microsoft is pitching the models on both price and efficiency.

The company says the models are meant to be cheaper than rival offerings, and Suleyman said the transcription system can be delivered with half the GPUs used by leading competitors.

Microsoft disclosed starting prices for the new models.

MAI-Transcribe-1 starts at $0.36 per hour of audio. MAI-Voice-1 starts at $22 per 1 million characters. MAI-Image-2 starts at $5 per 1 million text-input tokens and $33 per 1 million image-output tokens.
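To make the starting prices concrete, the rough estimator below applies them directly. It assumes simple linear per-unit billing with no minimums, tiers, or discounts, which the announcement does not confirm, and the function names are illustrative. `Decimal` is used so that currency arithmetic stays exact.

```python
from decimal import Decimal

# Starting list prices (USD) from Microsoft's announcement. Linear billing with
# no minimums, tiers, or discounts is an assumption of this sketch.
TRANSCRIBE_PER_AUDIO_HOUR = Decimal("0.36")
VOICE_PER_MILLION_CHARS = Decimal("22")
IMAGE_PER_MILLION_TEXT_INPUT_TOKENS = Decimal("5")
IMAGE_PER_MILLION_IMAGE_OUTPUT_TOKENS = Decimal("33")
MILLION = Decimal(1_000_000)


def transcription_cost(audio_hours: Decimal) -> Decimal:
    """MAI-Transcribe-1: priced per hour of audio."""
    return audio_hours * TRANSCRIBE_PER_AUDIO_HOUR


def voice_cost(characters: int) -> Decimal:
    """MAI-Voice-1: priced per million input characters."""
    return Decimal(characters) / MILLION * VOICE_PER_MILLION_CHARS


def image_cost(text_input_tokens: int, image_output_tokens: int) -> Decimal:
    """MAI-Image-2: text-input and image-output tokens are priced separately."""
    return (
        Decimal(text_input_tokens) / MILLION * IMAGE_PER_MILLION_TEXT_INPUT_TOKENS
        + Decimal(image_output_tokens) / MILLION * IMAGE_PER_MILLION_IMAGE_OUTPUT_TOKENS
    )


print(transcription_cost(Decimal(100)))     # 100 hours of meeting audio
print(voice_cost(500_000))                  # half a million characters of narration
print(image_cost(2_000_000, 1_000_000))     # 2M text-input + 1M image-output tokens
```

At these list prices, 100 hours of audio comes to $36, half a million characters of speech to $11, and the image example to $43. Actual bills depend on how Microsoft meters usage in practice.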

The models are the first public output of Microsoft’s MAI Superintelligence team.

Formed in late 2025 and led by Mustafa Suleyman, the group was created to train frontier models using Microsoft's own data and compute. Suleyman has also said Microsoft plans to build state-of-the-art models across all modalities, including a frontier large language model.

How to Access / Pricing

The MAI AI Models are available through Microsoft Foundry and MAI Playground for enterprise use. Microsoft has also said MAI-Image-2 is rolling out across Bing and PowerPoint. Starting prices are $0.36 per hour for transcription, $22 per 1 million characters for voice generation, and $5 per 1 million text-input tokens plus $33 per 1 million image-output tokens for image generation.

Use Cases

The clearest use cases Microsoft has named are video captioning, meeting transcription, conversation transcription, voice agents, synthetic voice creation, and image generation. When paired with a language model, the speech models also give Microsoft the pieces for a full voice pipeline on its own infrastructure.

Background / Context

Microsoft is developing its own models while maintaining a multi-year OpenAI partnership. The company has invested more than $13 billion in OpenAI, continues to host OpenAI models in Foundry, and uses OpenAI technology across products such as Copilot. At the same time, Microsoft renegotiated its OpenAI agreement in 2025 to gain more freedom to pursue advanced AI systems independently, and these model launches are an early visible result of that shift. Suleyman has said the revised agreement gave Microsoft the freedom to pursue its own superintelligence efforts while preserving the OpenAI relationship through 2032.

Why This Matters

The MAI AI Models show Microsoft moving from being mainly a distributor of OpenAI technology to becoming a more direct model builder across key multimodal categories. For developers and enterprise buyers, that creates a stronger Microsoft-native alternative for transcription, voice, and image workflows inside the same platform where OpenAI and other third-party models are also sold.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.

Microsoft and OpenAI signed a non-binding MOU while OpenAI’s nonprofit parent gains a $100B equity stake. Regulators are reviewing restructuring and access terms.

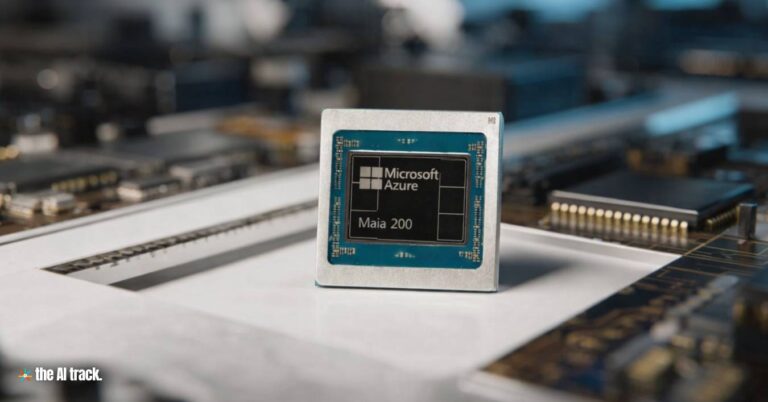

Microsoft unveiled Maia 200: 140B+ transistors, 216GB HBM3e, 30% better performance per dollar, and benchmarks vs Trainium and TPU.

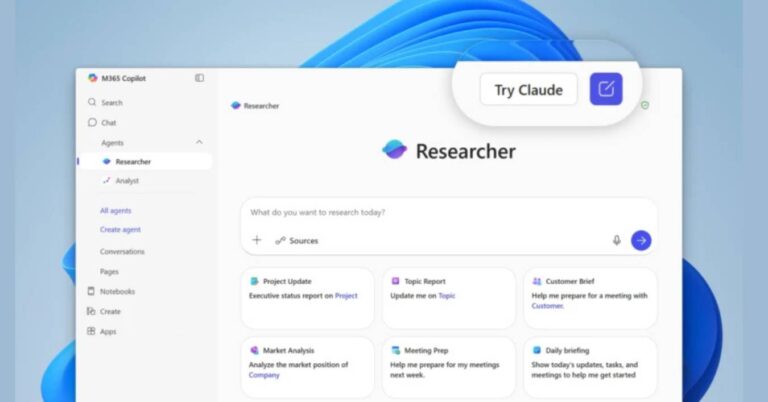

Microsoft Adds Anthropic for Researcher and Copilot Studio, integrating Claude Sonnet 4 and Opus 4.1 while OpenAI remains default in Microsoft 365 Copilot.

The Anthropic Defense Department lawsuit widened after Microsoft, rival AI researchers, retired military leaders, and rights groups backed the case.

Meta and AMD announce a 60 billion dollar, five-year AI infrastructure partnership covering up to 6 gigawatts of customized GPUs starting in 2H 2026.

Read a comprehensive monthly roundup of the latest AI news!