Key Takeaway

Meta and AMD have signed a five-year, 60 billion dollar agreement covering up to 6 gigawatts of customized GPUs, alongside a 10 percent equity stake, marking one of the largest AI infrastructure partnerships to date.

Meta and AMD Deal – Key Points

The Story

Meta and AMD have formalized a multi-year, multi-generation agreement under which Meta will purchase 60 billion dollars' worth of artificial intelligence chips over five years. The deal includes a 10 percent stake in AMD and a commitment to deploy up to 6 gigawatts of AMD Instinct GPUs. The first 1 gigawatt deployment, powered by a custom MI450-based GPU optimized for Meta workloads, is expected to begin shipping in the second half of 2026. The agreement positions Meta and AMD at the center of a broader push toward diversified, hyperscale AI infrastructure.

The Facts

60 billion dollar, five-year agreement and equity stake

Under the Meta and AMD partnership, Meta will purchase 60 billion dollars in AI chips over five years and acquire a 10 percent stake in AMD as part of a long-term strategic alignment.

Up to 6 gigawatts of customized Instinct GPUs

Meta and AMD signed a definitive agreement to deploy up to 6 gigawatts of AMD Instinct GPUs across multiple generations, using customized GPU solutions tailored to Meta workloads.

First 1 gigawatt deployment in 2H 2026

Shipments supporting the initial 1 gigawatt are scheduled for the second half of 2026, powered by a custom AMD Instinct GPU based on the MI450 architecture.

Helios rack-scale architecture

The infrastructure will run on AMD Helios rack-scale architecture, developed jointly by AMD and Meta through the Open Compute Project to support scalable AI deployments at rack level.

Expanded CPU collaboration

Meta will be a lead customer for 6th Gen AMD EPYC CPUs, codenamed “Venice,” as well as a next-generation EPYC processor called “Verano.” The first deployment pairs MI450-based GPUs with 6th Gen EPYC CPUs running ROCm software.

Aligned silicon, systems and software roadmaps

The partnership synchronizes GPU and CPU silicon, system design and software stacks to optimize performance-per-watt and total infrastructure efficiency.

Performance-based warrant structure

AMD has issued Meta a performance-based warrant for up to 160 million AMD shares. Vesting is tied to shipment milestones, from the first 1 gigawatt through the full 6 gigawatts, as well as to stock price and technical benchmarks.

Portfolio silicon strategy

Analysts describe the Meta and AMD agreement as part of a multivendor approach: Nvidia for frontier training, AMD for expanding inference, Meta's in-house MTIA chips for recommendation systems, and potential use of Google TPUs for Llama-related workloads.

Data center expansion at scale

Meta is building a major data center campus in Louisiana estimated to cost 27 billion dollars, reinforcing its infrastructure-first strategy as AI workloads grow.

Scale economics and efficiency impact

With more than 3.5 billion daily active users and hundreds of billions of AI interactions daily, even a 10 percent improvement in performance-per-watt for a given workload can translate into millions of dollars in annual savings.
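
To give a rough sense of that arithmetic, the sketch below estimates the yearly electricity saving from a 10 percent performance-per-watt gain on a fixed workload. Only the 1 gigawatt figure comes from the deal. The electricity price of 0.05 dollars per kilowatt-hour and the assumption of continuous full-power operation are hypothetical placeholders, not figures reported by Meta or AMD, and the function names are illustrative.

```python
# Back-of-the-envelope estimate, not reported data.
# ASSUMPTIONS (hypothetical): electricity price and utilization.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_cost_usd(power_gw, usd_per_kwh, utilization=1.0):
    """Yearly electricity cost for a deployment drawing power_gw gigawatts."""
    power_kw = power_gw * 1_000_000  # 1 GW = 1,000,000 kW
    return power_kw * utilization * HOURS_PER_YEAR * usd_per_kwh


def annual_savings_usd(power_gw, usd_per_kwh, perf_per_watt_gain, utilization=1.0):
    """Saving when the same workload runs at higher performance-per-watt.

    A gain of 0.10 means 10 percent more work per watt, so the same
    workload needs 1 / 1.10 of the original power.
    """
    baseline = annual_energy_cost_usd(power_gw, usd_per_kwh, utilization)
    improved = baseline / (1.0 + perf_per_watt_gain)
    return baseline - improved


if __name__ == "__main__":
    baseline = annual_energy_cost_usd(power_gw=1.0, usd_per_kwh=0.05)
    savings = annual_savings_usd(power_gw=1.0, usd_per_kwh=0.05, perf_per_watt_gain=0.10)
    print(f"Baseline annual energy cost: ${baseline:,.0f}")
    print(f"Annual savings at +10% perf/W: ${savings:,.0f}")
```

Under these placeholder inputs, the baseline comes to roughly 438 million dollars a year and the saving to roughly 40 million dollars. Note that a 10 percent efficiency gain trims the bill by about 9.1 percent rather than a full 10 percent, because the same work needs 1/1.10 of the original power. Real savings would depend on actual power prices, utilization and cooling overhead.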

Investor outlook and AI roadmap

Morningstar maintains a USD 850 per share fair value estimate for Meta and expects clearer returns on AI investments through 2026, particularly via the ad business. It also describes Meta’s upcoming large language model as a significant upgrade to Llama 4.

AI market volatility backdrop

The Meta and AMD agreement comes amid market volatility linked to agentic AI tools. On the same day, Anthropic announced deeper enterprise integrations for Claude Cowork, intensifying competition across enterprise AI platforms.

Background / Context

Meta has sharply increased AI investment, including aggressive talent recruitment and infrastructure expansion.

AMD is Nvidia’s primary US-based competitor in AI accelerators and has positioned its Instinct GPUs and EPYC CPUs as full-stack AI solutions.

Nvidia remains the dominant supplier of AI GPUs, but supply constraints and competitive risk have accelerated hyperscaler interest in diversified silicon stacks.

Why This Matters

Meta and AMD are effectively codifying a hyperscale, multivendor AI compute model. For users, that translates into faster AI feature rollouts, more resilient infrastructure, and potential long-term cost efficiencies as AI usage scales into the hundreds of billions of daily interactions.

This article was drafted with the assistance of generative AI. All facts and details were reviewed and confirmed by an editor prior to publication.

Nvidia and AMD will give 15% of China chip revenues to the US for export licences in an unprecedented deal facing bipartisan legal and security criticism.

OpenAI and AMD agreed a 6GW AI chip deal starting 2026, with OpenAI gaining option for ~10% AMD stake; AMD shares surged over 34%.

Explore the vital role of AI chips in driving the AI revolution, from semiconductors to processors: key players, market dynamics, and future implications.

All you need to know about the critical components of AI infrastructure, hardware, software, and networking, that are essential for supporting AI workloads.

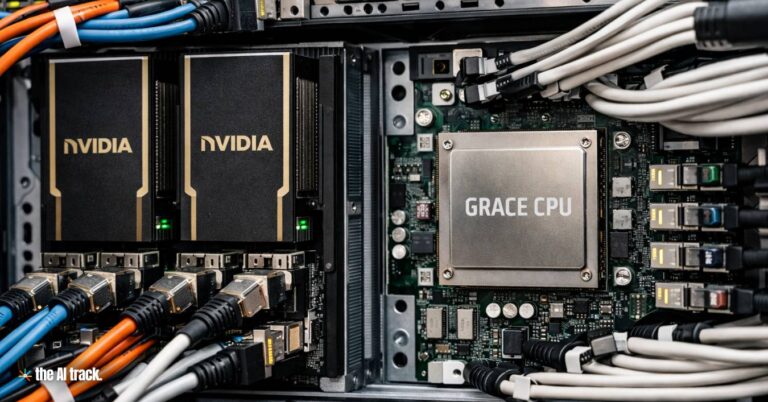

Meta – Nvidia deal scales to millions of Nvidia GPUs and adds standalone Grace CPUs, plus confidential computing designed for WhatsApp AI processing.

Meta’s $14.8B deal with Scale AI secures key data infrastructure, elevates Alexandr Wang, and raises antitrust concerns over market consolidation.

Read a comprehensive monthly roundup of the latest AI news!