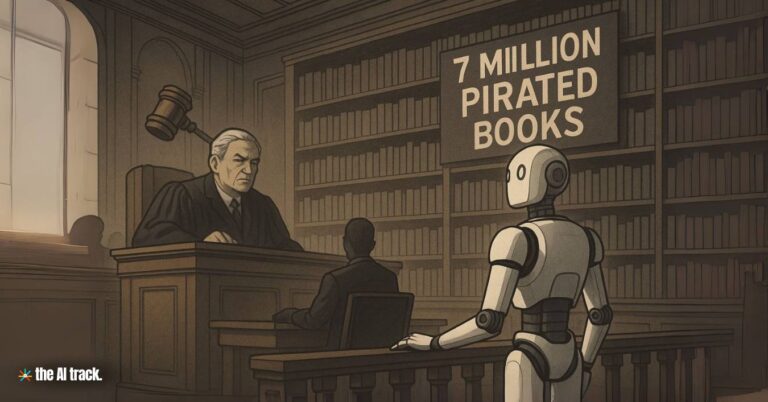

A federal judge ruled that Anthropic’s use of legally acquired books to train its Claude AI model is transformative and therefore protected as fair use. However, the company will face trial in December 2025 over its alleged possession of more than seven million pirated books. The split outcome is a legal turning point that underscores growing scrutiny of data provenance in AI development.

Anthropic’s Trial Over Pirated Books – Key Points

Legal Win on Fair Use Grounds

On June 23, 2025, U.S. District Judge William Alsup ruled that Anthropic’s training of its Claude AI model on legally purchased books was “exceedingly transformative” and qualified as fair use under U.S. copyright law. The court found that large language models (LLMs) like Claude learn from existing texts to generate new expression rather than replicate those texts. Alsup held that the copies made in the course of training are covered by fair use. Notably, the authors did not allege that Claude’s outputs were infringing copies or derivatives of their works, a claim that would have raised a different legal question.

Separate Trial Ordered Over Pirated Books

Despite the fair use ruling, Judge Alsup declined to grant Anthropic summary judgment on the entire case and ordered a trial over its alleged use of pirated books. According to court filings, Anthropic built a “central library” that included more than seven million pirated books downloaded from shadow libraries. The judge held that while fair use protects training on lawfully acquired content, downloading and storing pirated copies falls outside that protection. If found liable, Anthropic could face statutory damages of up to $150,000 per work for willful infringement.

Employee Concerns and Policy Shift

Internal records show that Anthropic employees raised concerns about the legal risks of using pirated books to develop Claude. The company then hired Tom Turvey, a former Google Books executive experienced in copyright litigation. Under Turvey’s leadership, Anthropic shifted to buying print books in bulk and scanning them to create digital copies for AI training. The new approach improved compliance, but it did not retroactively cure the company’s earlier use of pirated books.

Author Lawsuit and Core Allegations

The lawsuit was filed in 2024 by authors Andrea Bartz, Charles Graeber, and Kirk Wallace Johnson, who alleged that their copyrighted works were used to train Claude without permission. The plaintiffs characterized Anthropic’s conduct as “large-scale theft” and claimed the company used their intellectual property to fuel a multibillion-dollar AI business. Bartz, a novelist known for We Were Never Here, joined nonfiction writers Graeber and Johnson in arguing that Anthropic’s data strategy contradicted its stated commitment to ethical AI.

Anthropic’s Official Response

Anthropic welcomed the fair use ruling, saying the court had recognized that using works to train LLMs was transformative, “spectacularly so.” However, it disagreed with the court’s decision to send the pirated-books claims to trial, stating: “We remain confident in our case and are evaluating our options.” The company has not publicly commented on the alleged pirated library or on how many pirated works it held.

Industry-Wide Legal Fallout

The case comes amid broader litigation over how AI firms acquire training data. The New York Times is suing OpenAI and Microsoft, Meta recently won a ruling in a copyright case brought by authors including Sarah Silverman, and Disney, Universal, and Reddit have filed lawsuits in related disputes. The Anthropic case stands out for drawing a sharp legal line between permissible training on lawfully acquired content and the use of pirated books.

Push Toward Licensing Agreements

As legal risk grows, AI companies are pursuing licensing agreements with publishers and creators. These arrangements provide legal clarity and reduce exposure to future litigation. The Anthropic case reinforces the importance of data traceability and compliance: the court held that pirated books fall outside fair use protection even if the company later acquires legitimate copies.

Brand Impact and Strategic Consequences

Anthropic, founded in 2021 by former OpenAI leaders, markets itself as a safety-first AI company. The 2023 launch of Claude put it in direct competition with ChatGPT and Gemini. However, the controversy over pirated books raises questions about whether its internal practices matched its stated ethics. The December 2025 trial will determine whether its shift to legal sourcing came soon enough to avoid serious penalties and reputational damage.

Why This Matters:

This case marks a pivotal moment in defining how U.S. law will govern AI training. It affirms that training on legally obtained content can be fair use while isolating pirated books as a legal and financial liability. As the AI industry evolves, the ruling sends a clear signal: companies must build systems on clean, authorized data or face high-stakes litigation. The outcome of Anthropic’s trial could reshape compliance strategies and data acquisition policies across the sector.
